Unit - 4

Orthogonal complements

Q1) What are orthogonal complements?

A1)

Let V be an inner product space. A subset of V is an orthonormal basis for V if it is an ordered basis that is orthonormal.

Let S be a subset of an inner product space V. The orthogonal complement of S, denoted by $S^\perp$ (read "S perp"), consists of those vectors in V that are orthogonal to every vector u ∈ S; that is,

$$S^\perp = \{x \in V : \langle x, u \rangle = 0 \text{ for all } u \in S\}.$$

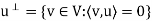

In particular, for a given vector u in V, we have

$$u^\perp = \{x \in V : \langle x, u \rangle = 0\}.$$

That is, $u^\perp$ consists of all vectors in V that are orthogonal to the given vector u.

We show that $S^\perp$ is a subspace of V. Clearly $0 \in S^\perp$, because 0 is orthogonal to every vector in V. Now suppose $v, w \in S^\perp$. Then, for any scalars a and b and any vector u ∈ S, we have

$$\langle av + bw, u \rangle = a\langle v, u \rangle + b\langle w, u \rangle = a \cdot 0 + b \cdot 0 = 0.$$

Thus $av + bw \in S^\perp$, and therefore $S^\perp$ is a subspace of V.

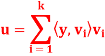

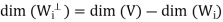
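The subspace argument can be checked numerically. The sketch below assumes the standard inner product on R^3; the set `S`, the vectors `v` and `w`, and the scalars `a` and `b` are hypothetical values chosen only for illustration.

```python
from fractions import Fraction

def inner(x, y):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))

def in_perp(v, S):
    """True when v is orthogonal to every vector in S."""
    return all(inner(v, u) == 0 for u in S)

# Hypothetical data: S is a subset of R^3, and v, w are two vectors in S-perp.
S = [(1, 1, 1), (2, 2, 2)]
v = (1, -1, 0)
w = (0, 1, -1)

# An arbitrary linear combination a*v + b*w, computed exactly.
a, b = Fraction(3, 2), Fraction(-5)
combo = tuple(a * vi + b * wi for vi, wi in zip(v, w))

zero_in_perp = in_perp((0, 0, 0), S)   # the zero vector lies in S-perp
combo_in_perp = in_perp(combo, S)      # closure under linear combinations
print(zero_in_perp, combo_in_perp)
```

Exact rational arithmetic is used so that the orthogonality tests compare against zero without rounding error.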

Q2) Let W be a finite-dimensional subspace of an inner product space V, and let y ∈ V. Then there exist unique vectors u ∈ W and $z \in W^\perp$ such that y = u + z. Furthermore, if $\{v_1, v_2, \ldots, v_k\}$ is an orthonormal basis for W, then

$$u = \sum_{i=1}^{k} \langle y, v_i \rangle v_i.$$

A2)

Let $\{v_1, v_2, \ldots, v_k\}$ be an orthonormal basis for W, let u be as defined in the preceding equation, and let z = y − u. Clearly u ∈ W and y = u + z.

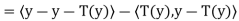

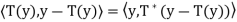

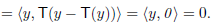

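To show that $z \in W^\perp$, it suffices to show that z is orthogonal to each $v_j$. For any j, we have

$$\langle z, v_j \rangle = \left\langle y - \sum_{i=1}^{k} \langle y, v_i \rangle v_i,\ v_j \right\rangle = \langle y, v_j \rangle - \sum_{i=1}^{k} \langle y, v_i \rangle \langle v_i, v_j \rangle = \langle y, v_j \rangle - \langle y, v_j \rangle = 0.$$

To show uniqueness of u and z, suppose that y = u + z = u′ + z′, where u′ ∈ W and $z' \in W^\perp$. Then $u - u' = z' - z \in W \cap W^\perp = \{0\}$. Therefore, u = u′ and z = z′.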

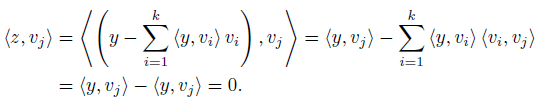

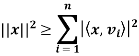
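As an illustration of the decomposition y = u + z, the following sketch computes $u = \sum_i \langle y, v_i \rangle v_i$ and z = y − u for a hypothetical orthonormal basis of a two-dimensional subspace W of R^3, under the standard inner product. The function name `decompose` and the sample data are assumptions made for this example.

```python
from fractions import Fraction

def inner(x, y):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))

def decompose(y, onb):
    """Return (u, z), where u is the sum of <y, v_i> v_i over onb and z = y - u."""
    u = [Fraction(0)] * len(y)
    for v in onb:
        c = inner(y, v)                       # Fourier coefficient <y, v_i>
        u = [ui + c * vi for ui, vi in zip(u, v)]
    z = [yi - ui for yi, ui in zip(y, u)]
    return u, z

# Hypothetical orthonormal basis of W = span{(1, 0, 0), (0, 3/5, 4/5)}.
onb = [(Fraction(1), Fraction(0), Fraction(0)),
       (Fraction(0), Fraction(3, 5), Fraction(4, 5))]
y = (Fraction(2), Fraction(5), Fraction(0))

u, z = decompose(y, onb)
print(u, z)
```

Here u lies in W by construction, and z is orthogonal to both basis vectors, so it lies in the orthogonal complement of W.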

Q3) What is Bessel’s inequality?

A3)

Bessel’s inequality: Let V be an inner product space, and let $S = \{v_1, v_2, \ldots, v_n\}$ be an orthonormal subset of V. For any x ∈ V we have

$$\|x\|^2 \ge \sum_{i=1}^{n} |\langle x, v_i \rangle|^2.$$

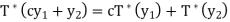

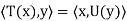

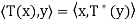

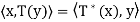

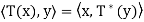

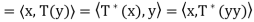
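A quick numerical check of Bessel's inequality $\|x\|^2 \ge \sum_i |\langle x, v_i \rangle|^2$ may help. The sketch assumes the standard inner product on R^4 and a hypothetical orthonormal set `S`. The second test vector lies in the span of `S`, where the two sides agree.

```python
from fractions import Fraction

def inner(x, y):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))

def bessel_sides(x, S):
    """Return (||x||^2, sum of <x, v_i>^2) for an orthonormal list S (real case)."""
    return inner(x, x), sum(inner(x, v) ** 2 for v in S)

# Hypothetical orthonormal subset of R^4.
S = [(Fraction(3, 5), Fraction(4, 5), 0, 0), (0, 0, 1, 0)]

# A vector outside span(S): the inequality is strict.
norm_sq, coeff_sq = bessel_sides((5, 0, 2, 7), S)

# A vector inside span(S): both sides are equal.
norm_sq_in, coeff_sq_in = bessel_sides((3, 4, 1, 0), S)
print(norm_sq, coeff_sq, norm_sq_in, coeff_sq_in)
```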

Q4) Let V be a finite-dimensional inner product space, and let T be a linear operator on V. Then there exists a unique function $T^* : V \to V$ such that $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$ for all x, y ∈ V. Furthermore, $T^*$ is linear.

A4)

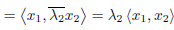

Let y ∈ V. Define g: V → F by g(x) = for all x ∈ V. We

for all x ∈ V. We

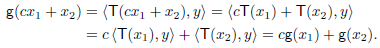

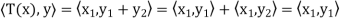

First show that g is linear. Let x1, x2 ∈ V and c ∈ F. Then

Hence g is linear.

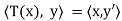

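Since V is finite-dimensional and g is linear, there is a unique vector $y' \in V$ such that $g(x) = \langle x, y' \rangle$; that is, $\langle T(x), y \rangle = \langle x, y' \rangle$ for all x ∈ V. Defining $T^* : V \to V$ by $T^*(y) = y'$, we have $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$.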

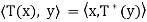

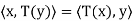

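To show that $T^*$ is linear, let $y_1, y_2 \in V$ and c ∈ F. Then for any x ∈ V, we have

$$\langle x, T^*(cy_1 + y_2) \rangle = \langle T(x), cy_1 + y_2 \rangle = \overline{c}\langle T(x), y_1 \rangle + \langle T(x), y_2 \rangle = \overline{c}\langle x, T^*(y_1) \rangle + \langle x, T^*(y_2) \rangle = \langle x, cT^*(y_1) + T^*(y_2) \rangle.$$

Since x is arbitrary, $T^*(cy_1 + y_2) = cT^*(y_1) + T^*(y_2)$.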

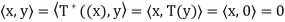

Finally, we need to show that $T^*$ is unique. Suppose that U: V → V is linear and that it satisfies $\langle T(x), y \rangle = \langle x, U(y) \rangle$ for all x, y ∈ V. Then $\langle x, T^*(y) \rangle = \langle x, U(y) \rangle$ for all x, y ∈ V, so $T^* = U$.

The linear operator $T^*$ described in this theorem is called the adjoint of the operator T. The symbol $T^*$ is read "T star."

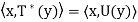

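Thus $T^*$ is the unique operator on V satisfying $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$ for all x, y ∈ V. Note that we also have

$$\langle x, T(y) \rangle = \overline{\langle T(y), x \rangle} = \overline{\langle y, T^*(x) \rangle} = \langle T^*(x), y \rangle.$$

So $\langle x, T(y) \rangle = \langle T^*(x), y \rangle$ for all x, y ∈ V.

For an infinite-dimensional inner product space, the adjoint of a linear operator T may be defined to be the function $T^*$ such that $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$ for all x, y ∈ V, provided it exists.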

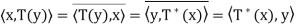

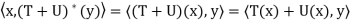

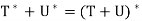
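For a concrete feel of the identity $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$, the sketch below takes T to be multiplication by a complex matrix A on C^2 with the standard inner product $\langle x, y \rangle = \sum_i x_i \overline{y_i}$. In that setting the adjoint is given by the conjugate transpose of A. The matrix and vectors are hypothetical sample values.

```python
def inner(x, y):
    """Standard inner product on C^n: sum of x_i * conj(y_i)."""
    return sum(a * b.conjugate() for a, b in zip(x, y))

def apply(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xj for a, xj in zip(row, x)) for row in A]

def conj_transpose(A):
    """Conjugate transpose A* of a matrix given as a list of rows."""
    return [[A[i][j].conjugate() for i in range(len(A))]
            for j in range(len(A[0]))]

# Hypothetical sample data.
A = [[1 + 2j, 3j], [2 + 0j, 1 - 1j]]
x = [1 + 1j, 2 - 1j]
y = [3j, 1 + 0j]

A_star = conj_transpose(A)
lhs = inner(apply(A, x), y)        # <A x, y>
rhs = inner(x, apply(A_star, y))   # <x, A* y>
print(lhs, rhs)
```

Both sides evaluate to the same complex number, as the defining property of the adjoint requires.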

Q5) Let V be an inner product space, and let T and U be linear operators on V. Then

(a) $(T + U)^* = T^* + U^*$;

(b) $(cT)^* = \overline{c}\,T^*$ for any c ∈ F;

(c) $(TU)^* = U^*T^*$;

(d) $T^{**} = T$;

(e) $I^* = I$.

A5)

Here we prove (a) and (d); the rest are proved similarly. Let x, y ∈ V.

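(a) Because

$$\langle x, (T + U)^*(y) \rangle = \langle (T + U)(x), y \rangle = \langle T(x), y \rangle + \langle U(x), y \rangle = \langle x, T^*(y) \rangle + \langle x, U^*(y) \rangle = \langle x, (T^* + U^*)(y) \rangle,$$

$T^* + U^*$ has the property unique to $(T + U)^*$. Hence $T^* + U^* = (T + U)^*$.

(d) Similarly, since

$$\langle x, T(y) \rangle = \langle T^*(x), y \rangle = \langle x, T^{**}(y) \rangle,$$

(d) follows.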
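The remaining parts follow the same pattern. As a sketch, the rules $(cT)^* = \overline{c}\,T^*$ and $(TU)^* = U^*T^*$ can be worked out using only the defining identity $\langle T(x), y \rangle = \langle x, T^*(y) \rangle$ and the conjugate linearity of the inner product in its second argument:

```latex
\begin{align*}
\langle x, (cT)^*(y) \rangle
  &= \langle cT(x), y \rangle
   = c\,\langle T(x), y \rangle
   = c\,\langle x, T^*(y) \rangle
   = \langle x, \overline{c}\,T^*(y) \rangle, \\
\langle x, (TU)^*(y) \rangle
  &= \langle T(U(x)), y \rangle
   = \langle U(x), T^*(y) \rangle
   = \langle x, U^*(T^*(y)) \rangle .
\end{align*}
```

Since each identity holds for all x and y, uniqueness of the adjoint gives the two rules.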

Q6) What is the least squares method?

A6)

Suppose a surveyor collects data by taking measurements $y_1, y_2, \ldots, y_m$ at times $t_1, t_2, \ldots, t_m$, respectively.

For example, the surveyor may want to measure the birth rate at various times during a given period.

Suppose the collected data set $\{(t_1, y_1), (t_2, y_2), \ldots, (t_m, y_m)\}$ is plotted as points in the plane.

From this plot, the surveyor finds that there is an approximately linear relationship between the two variables y and t, say y = ct + d, and would like to find the constants c and d so that the line y = ct + d represents the best possible fit to the data collected. One such measure of fit is the error E, the sum of the squares of the vertical distances from the points to the line; that is,

$$E = \sum_{i=1}^{m} (y_i - ct_i - d)^2.$$

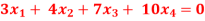

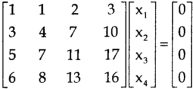

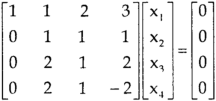

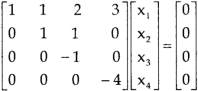
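One standard way to minimize $E = \sum_i (y_i - ct_i - d)^2$ is to set the partial derivatives with respect to c and d to zero and solve the resulting 2 × 2 system (the normal equations). The sketch below does this with exact fractions; the function names and the four data points are hypothetical, chosen only to illustrate the computation.

```python
from fractions import Fraction

def fit_line(ts, ys):
    """Return (c, d) minimizing sum (y_i - c*t_i - d)^2 via the normal equations."""
    m = len(ts)
    st, sy = sum(ts), sum(ys)
    stt = sum(t * t for t in ts)
    sty = sum(t * y for t, y in zip(ts, ys))
    det = m * stt - st * st
    if det == 0:
        raise ValueError("need at least two distinct times")
    c = Fraction(m * sty - st * sy, det)
    d = Fraction(stt * sy - st * sty, det)
    return c, d

def squared_error(ts, ys, c, d):
    """The error E for the line y = c*t + d."""
    return sum((y - c * t - d) ** 2 for t, y in zip(ts, ys))

# Hypothetical data points (t_i, y_i).
ts = [1, 2, 3, 4]
ys = [2, 3, 5, 7]

c, d = fit_line(ts, ys)
E = squared_error(ts, ys, c, d)
print(c, d, E)
```

For this data the fitted line is y = 1.7t with E = 0.3, and any small change in c or d increases E.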

Q7) Find the solution of the following homogeneous system of linear equations,

A7)

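The given system of linear equations can be written in matrix form as AX = 0, where A is the coefficient matrix.

Apply elementary row transformations to reduce A to echelon form.

Here r(A) = 4. A homogeneous system has only the trivial solution exactly when the rank of A equals the number of unknowns, so this system has only the trivial solution, in which every unknown is zero.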

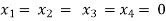

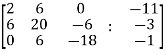

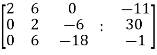

Q8) Check whether the following system of linear equations is consistent or not.

2x + 6y = -11

6x + 20y – 6z = -3

6y – 18z = -1

A8)

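Write the above system of linear equations in augmented matrix form,

$$C = [A \mid B] = \left[\begin{array}{ccc|c} 2 & 6 & 0 & -11 \\ 6 & 20 & -6 & -3 \\ 0 & 6 & -18 & -1 \end{array}\right].$$

Apply $R_2 \to R_2 - 3R_1$, we get

$$\left[\begin{array}{ccc|c} 2 & 6 & 0 & -11 \\ 0 & 2 & -6 & 30 \\ 0 & 6 & -18 & -1 \end{array}\right].$$

Apply $R_3 \to R_3 - 3R_2$, we get

$$\left[\begin{array}{ccc|c} 2 & 6 & 0 & -11 \\ 0 & 2 & -6 & 30 \\ 0 & 0 & 0 & -91 \end{array}\right].$$

Here the rank of C is 3 and the rank of A is 2.

Therefore the two ranks are not equal, so the given system of linear equations is not consistent.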
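The rank comparison can be reproduced mechanically. The following sketch computes ranks by Gaussian elimination over exact fractions and compares the rank of the coefficient matrix A with the rank of the augmented matrix C for the system in this question. The helper name `rank` is an assumption of the example.

```python
from fractions import Fraction

def rank(M):
    """Rank of a matrix (list of rows) by Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in row] for row in M]
    r = 0  # number of pivots found so far
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

# Coefficients and right-hand side of the system in Q8.
A = [[2, 6, 0], [6, 20, -6], [0, 6, -18]]
b = [-11, -3, -1]
C = [row + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]

rank_A, rank_C = rank(A), rank(C)
consistent = rank_A == rank_C
print(rank_A, rank_C, consistent)
```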

Q9) What do you understand by minimal solutions to systems of linear equations?

A9)

Even when a system of linear equations Ax = b is consistent, there may be no unique solution. In such cases, it may be desirable to find a solution of minimal norm. A solution s to Ax = b is called a minimal solution if $\|s\| \le \|u\|$ for all other solutions u. It can be shown that every consistent system of linear equations has a unique minimal solution, and that there is a method for computing it.
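A standard result gives one way to compute the minimal solution: if u is any solution of $(AA^*)u = b$, then $s = A^*u$ is the minimal solution of Ax = b. The sketch below applies this to a small hypothetical real system with two equations and three unknowns, so $A^*$ is the transpose. It assumes the two rows of A are linearly independent, so the 2 × 2 system can be solved by Cramer's rule.

```python
from fractions import Fraction

def dot(x, y):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))

def minimal_solution(A, b):
    """Minimal-norm solution of Ax = b for a real A with two independent rows."""
    r1, r2 = A
    g11, g12, g22 = dot(r1, r1), dot(r1, r2), dot(r2, r2)  # entries of A A^t
    det = g11 * g22 - g12 * g12
    if det == 0:
        raise ValueError("rows of A must be linearly independent")
    # Solve (A A^t) u = b by Cramer's rule.
    u1 = Fraction(b[0] * g22 - g12 * b[1], det)
    u2 = Fraction(g11 * b[1] - g12 * b[0], det)
    # The minimal solution is s = A^t u.
    return [u1 * a1 + u2 * a2 for a1, a2 in zip(r1, r2)]

# Hypothetical system: x + y = 1, y + z = 1.
A = [[1, 1, 0], [0, 1, 1]]
b = [1, 1]

s = minimal_solution(A, b)
other = [1, 0, 1]  # another solution, with a larger norm
print(s, dot(s, s), dot(other, other))
```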

Q10) Define a normal operator.

A10)

Let V be an inner product space, and let T be a linear operator on V. We say that T is normal if $TT^* = T^*T$. An n × n real or complex matrix A is normal if $AA^* = A^*A$.

Note: T is normal if and only if $[T]_\beta$ is normal, where β is an orthonormal basis.

For example, suppose that A is a real skew-symmetric matrix; that is, $A^t = -A$. Then A is normal because both $AA^t$ and $A^tA$ are equal to $-A^2$.
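The skew-symmetric example can be verified directly. The sketch below checks, for a hypothetical 3 × 3 real skew-symmetric matrix, that $AA^t$ and $A^tA$ agree and both equal $-A^2$. It also shows a matrix that is not normal for contrast. For real matrices the adjoint is the transpose, which is what the helper `is_normal` assumes.

```python
def transpose(A):
    """Transpose of a matrix given as a list of rows."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Matrix product A B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def is_normal(A):
    """Real case: A is normal when A A^t equals A^t A."""
    At = transpose(A)
    return matmul(A, At) == matmul(At, A)

# Hypothetical real skew-symmetric matrix (A^t = -A).
A = [[0, 2, -1], [-2, 0, 3], [1, -3, 0]]
neg_A_squared = [[-x for x in row] for row in matmul(A, A)]

# A matrix that is not normal, for contrast.
B = [[1, 1], [0, 1]]

print(is_normal(A), matmul(A, transpose(A)) == neg_A_squared, is_normal(B))
```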

Q11) Let T be a self-adjoint operator on a finite-dimensional inner product space V. Then

(a) Every eigenvalue of T is real.

(b) Suppose that V is a real inner product space. Then the characteristic polynomial of T splits.

A11)

(a) Suppose that $T(x) = \lambda x$ for $x \ne 0$. Because a self-adjoint operator is also normal, $T^*(x) = \overline{\lambda}x$, and so

$$\lambda x = T(x) = T^*(x) = \overline{\lambda}x.$$

So $\lambda = \overline{\lambda}$; that is, λ is real.

(b) Let n = dim(V), let β be an orthonormal basis for V, and let $A = [T]_\beta$. Then A is self-adjoint. Let $T_A$ be the linear operator on $\mathbb{C}^n$ defined by $T_A(x) = Ax$ for all $x \in \mathbb{C}^n$. Note that $T_A$ is self-adjoint because $[T_A]_\gamma = A$, where γ is the standard ordered (orthonormal) basis for $\mathbb{C}^n$. So, by (a), the eigenvalues of $T_A$ are real. By the fundamental theorem of algebra, the characteristic polynomial of $T_A$ splits into factors of the form $t - \lambda$. Since each λ is real, the characteristic polynomial splits over R. But $T_A$ has the same characteristic polynomial as A, which has the same characteristic polynomial as T. Therefore the characteristic polynomial of T splits.

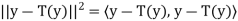
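The 2 × 2 case makes the result easy to see. For a real symmetric matrix with rows (a, b) and (b, d), the characteristic polynomial is $t^2 - (a + d)t + (ad - b^2)$, whose discriminant $(a - d)^2 + 4b^2$ is never negative, so both roots are real. The sketch below computes them; the function name and the sample entries are hypothetical.

```python
import math

def eigenvalues_symmetric_2x2(a, b, d):
    """Eigenvalues of [[a, b], [b, d]] from t^2 - (a + d) t + (a d - b^2) = 0."""
    disc = (a - d) ** 2 + 4 * b * b   # never negative for real a, b, d
    root = math.sqrt(disc)
    return (a + d - root) / 2, (a + d + root) / 2

low, high = eigenvalues_symmetric_2x2(2, 1, 2)
print(low, high)
```

By contrast, the non-symmetric real matrix with rows (0, −1) and (1, 0) has characteristic polynomial $t^2 + 1$, which does not split over R.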

Q12) Let V be an inner product space, and let T be a linear operator on V. Then T is an orthogonal projection if and only if T has an adjoint $T^*$ and $T^2 = T = T^*$.

.

A12)

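Suppose that T is an orthogonal projection. Since $T^2 = T$ because T is a projection, we need only show that $T^*$ exists and $T = T^*$. Now V = R(T) ⊕ N(T) and $R(T)^\perp = N(T)$. Let x, y ∈ V. Then we can write $x = x_1 + x_2$ and $y = y_1 + y_2$, where $x_1, y_1 \in R(T)$ and $x_2, y_2 \in N(T)$. Hence

$$\langle x, T(y) \rangle = \langle x_1 + x_2, y_1 \rangle = \langle x_1, y_1 \rangle + \langle x_2, y_1 \rangle = \langle x_1, y_1 \rangle$$

and

$$\langle T(x), y \rangle = \langle x_1, y_1 + y_2 \rangle = \langle x_1, y_1 \rangle + \langle x_1, y_2 \rangle = \langle x_1, y_1 \rangle.$$

So $\langle x, T(y) \rangle = \langle T(x), y \rangle$ for all x, y ∈ V; thus $T^*$ exists and $T = T^*$.

Now suppose that $T^2 = T = T^*$. Then T is a projection, and hence we must show that $R(T) = N(T)^\perp$ and $R(T)^\perp = N(T)$.

Let x ∈ R(T) and y ∈ N(T). Then $x = T(x) = T^*(x)$, and so

$$\langle x, y \rangle = \langle T^*(x), y \rangle = \langle x, T(y) \rangle = \langle x, 0 \rangle = 0.$$

Therefore $x \in N(T)^\perp$, from which it follows that $R(T) \subseteq N(T)^\perp$.

Let $y \in N(T)^\perp$. We must show that y ∈ R(T), that is, T(y) = y. Now

$$\|y - T(y)\|^2 = \langle y - T(y), y - T(y) \rangle = \langle y, y - T(y) \rangle - \langle T(y), y - T(y) \rangle.$$

Since y − T(y) ∈ N(T), the first term must equal zero. But also

$$\langle T(y), y - T(y) \rangle = \langle y, T^*(y - T(y)) \rangle = \langle y, T(y - T(y)) \rangle = \langle y, 0 \rangle = 0.$$

Thus y − T(y) = 0; that is, y = T(y) ∈ R(T). Hence $R(T) = N(T)^\perp$.

Finally, this result gives $R(T)^\perp = N(T)^{\perp\perp} \supseteq N(T)$. Conversely, suppose $x \in R(T)^\perp$. For any y ∈ V, we have $\langle T(x), y \rangle = \langle x, T^*(y) \rangle = \langle x, T(y) \rangle = 0$. So T(x) = 0, and thus x ∈ N(T). Hence $R(T)^\perp = N(T)$.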
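The criterion $T^2 = T = T^*$ is easy to test on matrices. In the sketch below, P is the matrix of the orthogonal projection of R^2 onto the line spanned by (1, 2), while Q is a projection ($Q^2 = Q$) that is not orthogonal, because it is not symmetric. Both matrices are hypothetical examples, and the real case is assumed, so the adjoint is the transpose.

```python
from fractions import Fraction

def transpose(A):
    """Transpose of a matrix given as a list of rows."""
    return [list(col) for col in zip(*A)]

def matmul(A, B):
    """Matrix product A B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def is_orthogonal_projection(P):
    """Real case of the criterion: P P == P and P^t == P."""
    return matmul(P, P) == P and transpose(P) == P

# Orthogonal projection of R^2 onto span{(1, 2)}: v v^t / (v^t v).
P = [[Fraction(1, 5), Fraction(2, 5)],
     [Fraction(2, 5), Fraction(4, 5)]]

# A projection onto span{(1, 0)} along span{(1, -1)}; not orthogonal.
Q = [[1, 1], [0, 0]]

print(is_orthogonal_projection(P), is_orthogonal_projection(Q))
```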

Q13) State and prove the spectral theorem.

A13)

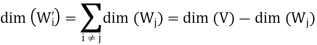

Let T be a linear operator on a finite-dimensional inner product space V over F with the distinct eigenvalues $\lambda_1, \lambda_2, \ldots, \lambda_k$. Assume that T is normal if F = C and that T is self-adjoint if F = R. For each i (1 ≤ i ≤ k), let $W_i$ be the eigenspace of T corresponding to the eigenvalue $\lambda_i$, and let $T_i$ be the orthogonal projection of V on $W_i$. Then the following statements are true.

(a) $V = W_1 \oplus W_2 \oplus \cdots \oplus W_k$.

(b) If $W_i'$ denotes the direct sum of the subspaces $W_j$ for $j \ne i$, then $W_i^\perp = W_i'$.

(c) $T_iT_j = \delta_{ij}T_i$ for 1 ≤ i, j ≤ k.

(d) $I = T_1 + T_2 + \cdots + T_k$.

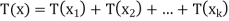

(e) $T = \lambda_1T_1 + \lambda_2T_2 + \cdots + \lambda_kT_k$.

Proof:

(a) Under these hypotheses T is diagonalizable, so that

$$V = W_1 \oplus W_2 \oplus \cdots \oplus W_k.$$

(b) If $x \in W_i$ and $y \in W_j$ for some $i \ne j$, then $\langle x, y \rangle = 0$, because eigenvectors of a normal operator that correspond to distinct eigenvalues are orthogonal. It follows easily from this result that $W_i' \subseteq W_i^\perp$. From (a), we have

$$\dim(W_i') = \sum_{j \ne i} \dim(W_j) = \dim(V) - \dim(W_i).$$

On the other hand, we have $\dim(W_i^\perp) = \dim(V) - \dim(W_i)$. Hence $W_i' = W_i^\perp$.

(c) Each $T_i$ is a projection, so $T_i^2 = T_i$. For $i \ne j$, the range of $T_j$ is $W_j \subseteq W_i' = W_i^\perp = N(T_i)$ by (b), so $T_iT_j$ is the zero operator.

(d) Since $T_i$ is the orthogonal projection of V on $W_i$, it follows from (b) that $N(T_i) = R(T_i)^\perp = W_i^\perp = W_i'$. Hence, for x ∈ V, we have $x = x_1 + x_2 + \cdots + x_k$, where $T_i(x) = x_i \in W_i$, which proves (d).

(e) For x ∈ V, write $x = x_1 + x_2 + \cdots + x_k$, where $x_i \in W_i$. Then

$$T(x) = T(x_1) + T(x_2) + \cdots + T(x_k) = \lambda_1x_1 + \lambda_2x_2 + \cdots + \lambda_kx_k = \lambda_1T_1(x) + \lambda_2T_2(x) + \cdots + \lambda_kT_k(x) = (\lambda_1T_1 + \lambda_2T_2 + \cdots + \lambda_kT_k)(x).$$

The set $\{\lambda_1, \lambda_2, \ldots, \lambda_k\}$ of eigenvalues of T is called the spectrum of T, the sum $I = T_1 + T_2 + \cdots + T_k$ in (d) is called the resolution of the identity operator induced by T, and the sum $T = \lambda_1T_1 + \lambda_2T_2 + \cdots + \lambda_kT_k$ in (e) is called the spectral decomposition of T. The spectral decomposition of T is unique up to the order of its eigenvalues.
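A small worked case may make the decomposition concrete. The sketch below uses the hypothetical real symmetric matrix with rows (2, 1) and (1, 2), whose eigenvalues 1 and 3 have eigenvectors (1, −1) and (1, 1); these eigenpairs were found by hand and are an assumption of the example. Each eigenspace is a line, so the orthogonal projection onto it is $vv^t / (v^tv)$.

```python
from fractions import Fraction

def matmul(A, B):
    """Matrix product A B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def add(A, B):
    """Entrywise sum of two matrices."""
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def scale(c, A):
    """Scalar multiple c A."""
    return [[c * a for a in row] for row in A]

def projection_onto(v):
    """Orthogonal projection of R^n onto span{v}: v v^t / (v^t v)."""
    n = sum(a * a for a in v)
    return [[Fraction(a * b, n) for b in v] for a in v]

A = [[2, 1], [1, 2]]
T1 = projection_onto((1, -1))   # projection on the eigenspace for eigenvalue 1
T2 = projection_onto((1, 1))    # projection on the eigenspace for eigenvalue 3

identity = add(T1, T2)                        # resolution of the identity
rebuilt = add(scale(1, T1), scale(3, T2))     # spectral decomposition
cross = matmul(T1, T2)                        # product of distinct projections
print(identity, rebuilt, cross)
```

The three printed matrices are the identity, the original matrix A, and the zero matrix, matching parts (d), (e), and (c) of the theorem.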

Q14) Give some general properties of normal operators.

A14)

Let V be an inner product space, and let T be a normal operator on V. Then the following statements are true.

(a) $\|T(x)\| = \|T^*(x)\|$ for all x ∈ V.

(b) T − cI is normal for every c ∈ F.

(c) If x is an eigenvector of T, then x is also an eigenvector of $T^*$. In fact, if $T(x) = \lambda x$, then $T^*(x) = \overline{\lambda}x$.

(d) If $\lambda_1$ and $\lambda_2$ are distinct eigenvalues of T with corresponding eigenvectors $x_1$ and $x_2$, then $x_1$ and $x_2$ are orthogonal.

Proof:

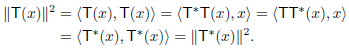

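(a) For any x ∈ V, we have

$$\|T(x)\|^2 = \langle T(x), T(x) \rangle = \langle T^*T(x), x \rangle = \langle TT^*(x), x \rangle = \langle T^*(x), T^*(x) \rangle = \|T^*(x)\|^2.$$

(b) The proof is left as an exercise.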

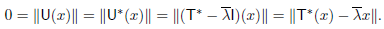

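(c) Suppose that $T(x) = \lambda x$ for some x ∈ V. Let $U = T - \lambda I$. Then U(x) = 0, and U is normal by (b). Thus (a) implies that

$$0 = \|U(x)\| = \|U^*(x)\| = \|(T^* - \overline{\lambda}I)(x)\| = \|T^*(x) - \overline{\lambda}x\|.$$

Hence $T^*(x) = \overline{\lambda}x$, so x is an eigenvector of $T^*$.

(d) Let $\lambda_1$ and $\lambda_2$ be distinct eigenvalues of T with corresponding eigenvectors $x_1$ and $x_2$. Then, using (c), we have

$$\lambda_1\langle x_1, x_2 \rangle = \langle \lambda_1x_1, x_2 \rangle = \langle T(x_1), x_2 \rangle = \langle x_1, T^*(x_2) \rangle = \langle x_1, \overline{\lambda_2}x_2 \rangle = \lambda_2\langle x_1, x_2 \rangle.$$

Since $\lambda_1 \ne \lambda_2$, we conclude that $\langle x_1, x_2 \rangle = 0$.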
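For completeness, here is one way part (b) can be carried out. It is a sketch that uses only the adjoint rules $(T + U)^* = T^* + U^*$, $(cT)^* = \overline{c}\,T^*$, and $I^* = I$, together with the assumption $TT^* = T^*T$:

```latex
\begin{align*}
(T - cI)(T - cI)^*
  &= (T - cI)(T^* - \overline{c}I)
   = TT^* - \overline{c}\,T - c\,T^* + |c|^2 I, \\
(T - cI)^*(T - cI)
  &= (T^* - \overline{c}I)(T - cI)
   = T^*T - c\,T^* - \overline{c}\,T + |c|^2 I .
\end{align*}
```

The two right-hand sides agree because $TT^* = T^*T$, so T − cI is normal.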