Unit 5

Virtualization

Virtualization is a technique for creating a virtual version of computing resources such as storage devices and server operating systems. It lets multiple users, each working on their own machine across the network, share a single physical instance of a resource. Compared with conventional computing methods, cloud virtualization makes workload management more efficient, economical and scalable.

Virtualization is being integrated into cloud computing and is changing the fundamental course of computing. One of its most important features is the way it enables applications to be shared across a network of multiple companies and users.

Cloud computing can be viewed as a service or an application that supports a virtualized ecosystem, which may be private or public. With virtualization, resource utilization can be maximized, reducing the need for physical systems.

Types of Virtualization

The basic concept of virtualization dates back to the 1960s, when mainframe computers were becoming the norm. Over the last 10 to 15 years it has become standard practice, and most servers today are readily virtualized. There are multiple types of virtualization in the context of cloud computing:

1) Operating System Virtualization

In cloud computing, operating system virtualization is where the virtualization software is installed on the host operating system rather than directly on the hardware. One of the fundamental uses of OS virtualization is testing applications on different operating systems and platforms. The software makes virtual environments available on top of the hardware, so that different instances of an application can run on them.

2) Hardware Virtualization

In cloud computing, hardware virtualization is used on server platforms because it offers more flexibility than physical machines. In hardware virtualization, the VM software is installed directly on the hardware system.

It comprises a hypervisor that controls and monitors the processor, hardware resources and memory of the system. Once hardware virtualization is in place, users can install different operating systems on it and run different applications simultaneously.

3) Server Virtualization

In this type of virtualization the software is installed directly on the server system. A single physical server is split into multiple virtual servers depending on the demand it is managing and the load being processed. Server virtualization masks server resources, including their identity and number, from users. In summary, the installed software divides the physical server into multiple virtual counterparts.

4) Storage Virtualization

In cloud computing, storage virtualization is the grouping of physical storage from various network storage devices so that it looks like a single storage unit. It is implemented with software applications and is commonly used for backup and recovery processes.
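The grouping of several devices into what looks like one storage unit can be illustrated with a minimal Python sketch. It is not how any particular product works: the `StoragePool` class, its method names and the use of in-memory `bytearray` objects as "devices" are all assumptions made for illustration.

```python
class StoragePool:
    """Presents several backing devices as one logical byte range (toy model)."""

    def __init__(self, device_sizes):
        # Assumption: each physical device is modelled as an in-memory bytearray.
        self.devices = [bytearray(size) for size in device_sizes]

    @property
    def capacity(self):
        # The pool looks like a single unit whose size is the sum of its parts.
        return sum(len(device) for device in self.devices)

    def _locate(self, offset):
        """Map a logical offset to (device index, offset inside that device)."""
        if offset < 0:
            raise IndexError("negative offset")
        for index, device in enumerate(self.devices):
            if offset < len(device):
                return index, offset
            offset -= len(device)
        raise IndexError("offset beyond pool capacity")

    def write(self, offset, data):
        # Writes may span device boundaries; the caller never sees them.
        for i, byte in enumerate(data):
            index, local = self._locate(offset + i)
            self.devices[index][local] = byte

    def read(self, offset, length):
        out = bytearray()
        for i in range(length):
            index, local = self._locate(offset + i)
            out.append(self.devices[index][local])
        return bytes(out)


pool = StoragePool([4, 8, 4])   # three small "devices"
pool.write(2, b"hello")         # crosses from the first device into the second
print(pool.capacity)            # 16
print(pool.read(2, 5))          # b'hello'
```

The caller addresses one flat range of 16 bytes, while the data is physically split across the first two devices.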

Benefits of Virtualization

Following are benefits of virtualization in Cloud Computing.

1) Security

Security is one of the most important aspects of adopting virtualization, since it is a recurrent concern. It is provided through firewalls that help prevent unauthorized access and keep data safe and confidential.

Firewalls also provide protection against other cyber threats and virus attacks. The protocols include encryption, which protects data from other threats. Users can virtualize their data and keep a backup of the same data on a separate server if they need to.

2) Flexibility in operations

With virtualization, professionals can work more easily, and processes become more streamlined and agile. The network switches in use today provide easy access and use, which also saves time.

Virtualization helps detect and resolve technical errors occurring in any one of the connected devices. It also removes the problem of retaining or recovering data lost because of a corrupted or crashed device. As a result, it improves ROI and saves a lot of time.

3) Economical agility

It is one of the main reasons for the fast adoption of virtualization. The technique can save companies from spending a fortune on physical devices and servers, because in a virtual environment data is stored on virtual servers.

It also cuts the relentless electricity consumption of running many physical servers and devices, reducing bills while still running multiple instances of operating systems and applications across the network of users and companies.

4) Streamlining system failure

When performing any task, there is a chance that the system will malfunction at a critical time. Such failures are detrimental to a company’s resources and damage its reputation. With virtualization, these risks can be reduced, since users can perform the same task simultaneously on multiple devices.

Data stored in the cloud can be retrieved at any time from any of the devices in use. Servers are also deployed in pairs, which keeps the data accessible at any given point in time: even when one server goes down, a secondary server gives users the access they require to retrieve their data.

5) Flexibility in data transfer

Data transferred to the virtual servers can be retrieved at any time. This makes finding data easier, since users do not waste time getting what they need. With virtualization at their disposal, users can easily locate and transfer specific data to their respective administrator without any security dispute.

Transfers have no data cap and can span long distances at a nominal charge. Adding storage units to the virtual pool of servers can reduce the price further.

Virtualization can be implemented with a wide spectrum of technologies, many of which are open source and easily available. Notable technologies that are in use or have a pervading influence in the industry include OpenVZ, KVM and Xen.

Virtualization Architecture

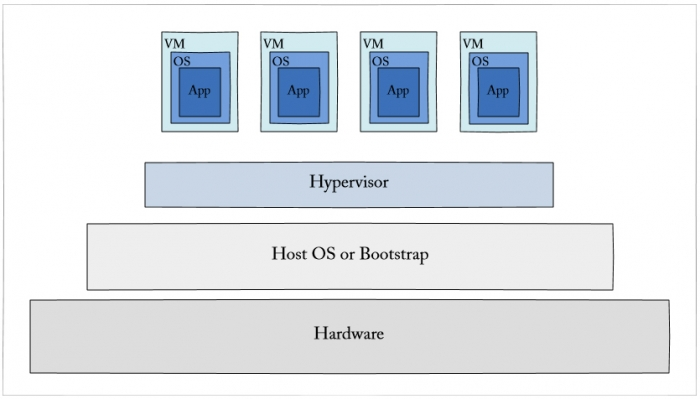

Virtualization is commonly hypervisor-based. The hypervisor isolates operating systems and applications from the underlying computer hardware so the host machine can run multiple virtual machines (VMs) as guests that share the system's physical resources, such as processor cycles, memory space and network bandwidth.

Type 1 hypervisors, also called bare-metal hypervisors, run directly on top of the host system hardware. Bare-metal hypervisors offer high availability and resource management. Their direct access to system hardware ensures better performance, scalability and stability. Examples of type 1 hypervisors include Microsoft Hyper-V, Citrix XenServer and VMware ESXi.

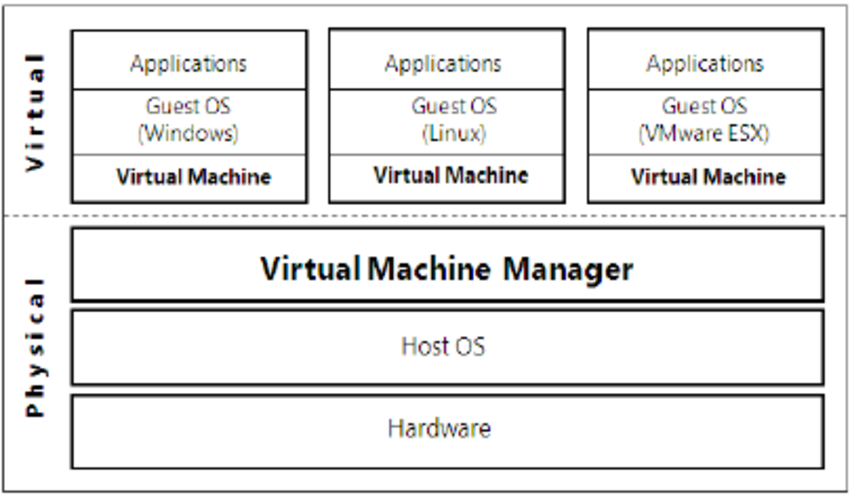

Fig 5.1(I) shows Virtualization architecture

A type 2 hypervisor, also known as a hosted hypervisor, is installed on top of the host operating system rather than sitting directly on the hardware as the type 1 hypervisor does. Each guest OS or VM runs above the hypervisor. The convenience of a known host OS can ease system configuration and management tasks. However, the host OS layer can limit performance and expose possible OS security flaws. Examples of type 2 hypervisors include VMware Workstation, Virtual PC and Oracle VM VirtualBox.

Hypervisor

A hypervisor is a form of virtualization software used in cloud hosting to divide and allocate the resources of various pieces of hardware. The program that provides partitioning, isolation or abstraction is known as the virtualization hypervisor. It is a hardware virtualization technique that allows multiple guest operating systems to run on a single host system at the same time. A hypervisor is also called a virtual machine manager (VMM).

Types of Hypervisor

A. Type-1 Hypervisor

A Type 1 hypervisor runs directly on the host system's hardware. It is known as a “native hypervisor” or “bare-metal hypervisor”. It does not require a base server operating system and has direct access to hardware resources. Examples of Type 1 hypervisors include VMware ESXi, Citrix XenServer and Microsoft Hyper-V.

B. Type-2 Hypervisor

A Type 2 hypervisor runs on top of a host operating system installed on the host system. It is known as a “hosted hypervisor”, meaning software installed on an operating system. The hypervisor asks the operating system to make hardware calls on its behalf. Examples of Type 2 hypervisors include VMware Player and Parallels Desktop. Hosted hypervisors are often found on endpoints such as PCs.

Choosing the right hypervisor

Type 1 hypervisors provide better performance than Type 2 because there’s no middle layer, making them the logical choice for mission-critical applications and workloads. But that’s not to say that hosted hypervisors don’t have their place. They are much simpler to set up, so they’re a good bet if, say, you need to deploy a test environment quickly.

One of the best ways to determine which hypervisor meets your requirements is to compare their performance metrics. These include CPU overhead, the maximum amount of host and guest memory, and support for virtual processors. The following factors should be studied before choosing a suitable hypervisor:

1. Understand your needs

The company and its applications are the reason the data center exists. Besides your company’s needs, you and your co-workers in IT also have your own needs. The needs to consider for a virtualization hypervisor are:

a. Flexibility

b. Scalability

c. Usability

d. Availability

e. Reliability

f. Efficiency

g. Reliable support

2. The cost of a hypervisor

The difficult part of selecting a hypervisor is striking the right balance between cost and functionality. While a number of entry-level solutions are free, prices at the opposite end of the market can be staggering. Licensing frameworks also vary, so it’s important to be aware of exactly what you’re getting for your money.

3. Virtual machine performance

Virtual systems should achieve or exceed the performance of their physical counterparts at least in relation to the applications within each server. Everything beyond meeting this benchmark is profit.

4. Ecosystem

The role of a hypervisor’s ecosystem, that is, the availability of documentation, support, training, third-party developers and consultancies, should not be overlooked when determining whether or not a solution is cost-effective in the long term.

5. Test for yourself

You can gain basic experience using your existing desktop or laptop. You can run both VMware vSphere and Microsoft Hyper-V in VMware Workstation or VMware Fusion to create a nice virtual learning and testing environment.

Hypervisor reference model

In the hypervisor reference model, three modules coordinate to emulate the underlying hardware:

1. Dispatcher

2. Allocator

3. Interpreter

Dispatcher

The dispatcher acts as the entry point of the monitor and reroutes the instructions issued by the virtual machine instance to one of the other two modules.

Allocator

The allocator is responsible for deciding which system resources are provided to the virtual machine instance. Whenever a virtual machine tries to execute an instruction that would change the machine resources associated with it, the dispatcher invokes the allocator.

Interpreter

The interpreter module consists of interpreter routines. These are executed whenever a virtual machine executes a privileged instruction.
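The interplay of the three modules can be sketched in Python as follows. This is a toy model under stated assumptions, not the structure of any real hypervisor: the opcode names (`SET_MEMORY`, `HALT`, `IO_OUT`), the return strings and the rule that all other instructions run directly are hypothetical choices made for illustration.

```python
class Allocator:
    """Decides which system resources a VM instance receives."""

    def __init__(self, total_memory):
        self.free_memory = total_memory
        self.granted = {}

    def handle(self, vm, amount):
        # Refuse requests that exceed what is still free on the host.
        if amount > self.free_memory:
            return "denied"
        self.free_memory -= amount
        self.granted[vm] = self.granted.get(vm, 0) + amount
        return "granted"


class Interpreter:
    """Runs an emulation routine for each privileged instruction."""

    def __init__(self):
        self.log = []

    def handle(self, vm, opcode):
        # A real routine would emulate the instruction; here we only record it.
        self.log.append((vm, opcode))
        return "emulated"


class Dispatcher:
    """Entry point of the monitor: routes each instruction to one module."""

    RESOURCE_OPS = {"SET_MEMORY"}        # hypothetical resource-changing opcodes
    PRIVILEGED_OPS = {"HALT", "IO_OUT"}  # hypothetical privileged opcodes

    def __init__(self, allocator, interpreter):
        self.allocator = allocator
        self.interpreter = interpreter

    def trap(self, vm, opcode, operand=None):
        if opcode in self.RESOURCE_OPS:
            return self.allocator.handle(vm, operand)
        if opcode in self.PRIVILEGED_OPS:
            return self.interpreter.handle(vm, opcode)
        # Assumption: everything else is harmless and runs without the monitor.
        return "direct"


monitor = Dispatcher(Allocator(total_memory=1024), Interpreter())
print(monitor.trap("vm1", "SET_MEMORY", 512))   # granted
print(monitor.trap("vm2", "SET_MEMORY", 1024))  # denied
print(monitor.trap("vm1", "HALT"))              # emulated
print(monitor.trap("vm1", "ADD"))               # direct
```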

Virtualization is a computer architecture technology in which multiple virtual machines are multiplexed on the same hardware machine. The aim of a virtual machine is to extend resource sharing among many users and improve computer performance in terms of resource utilization and application flexibility.

Hardware resources such as CPU, memory and I/O devices, and software resources such as operating systems and software libraries, can be virtualized in various functional layers. This virtualization technology is widely used for distributed and cloud computing.

1) Levels of Virtualization Implementation

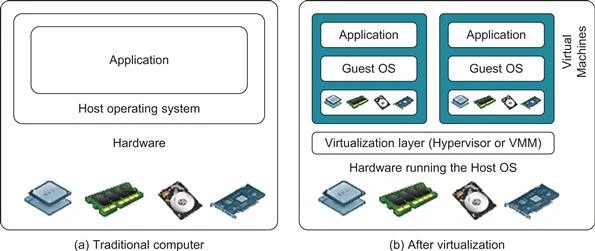

A traditional computer runs with a host OS specially tailored for its hardware architecture, as shown in Figure 5.2(I). After virtualization, different user applications managed by their own operating systems (guest OS) can run on the same hardware, independent of the host OS.

This is done by adding extra software, called a virtualization layer. This virtualization layer is known as the hypervisor or virtual machine monitor (VMM). The VMs are shown in the upper boxes, where applications run with their own guest OS over the virtualized CPU, memory, and I/O resources.

The main function of the software layer is to virtualize the physical hardware of a host machine into virtual resources to be used exclusively by the VMs. This can be implemented at various operational levels.

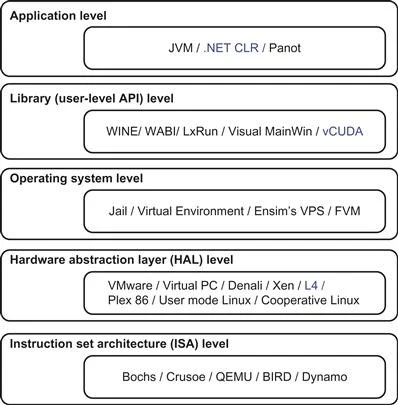

The virtualization software creates the abstraction of VMs by interposing a virtualization layer at various levels of a computer system. Common virtualization layers include the instruction set architecture (ISA) level, hardware level, operating system level, library support level, and application level.

Figure 5.2(I): The architecture of a computer system before and after virtualization.

Figure 5.2(II): Virtualization from hardware to applications, in five levels.

A. Instruction Set Architecture Level (ISA)

At this level, virtualization is performed by emulating a given ISA with the ISA of the host machine. For example, MIPS binary code can run on an x86-based host machine with the help of ISA emulation. This makes it possible to run a large amount of legacy binary code, written for various processors, on any given new hardware host machine.

Instruction set emulation leads to virtual ISAs created on any hardware machine. The basic emulation method is code interpretation: an interpreter program translates the source instructions to target instructions one by one. One source instruction may require multiple native target instructions to perform its function. Obviously, this process is relatively slow. For better performance, dynamic binary translation is desired.

This approach translates basic blocks of dynamic source instructions to target instructions. The basic blocks can also be extended to program traces or super blocks to increase translation efficiency. Instruction set emulation requires binary translation and optimization. A virtual instruction set architecture (V-ISA) thus requires adding a processor-specific software translation layer to the compiler.
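The basic interpretation method, in which source instructions are handled one by one, can be sketched in Python. The three-instruction guest ISA below (`LOADI`, `ADD`, `MULADD`) is invented for illustration and does not correspond to MIPS or any real instruction set; the `host_steps` counter is only a rough stand-in for the number of host operations needed.

```python
def interpret(program, registers=None):
    """Execute a toy guest program one source instruction at a time."""
    regs = dict(registers or {})
    host_steps = 0  # rough count of host operations performed
    for op, *args in program:
        if op == "LOADI":            # LOADI rd, imm   -> rd = imm
            rd, imm = args
            regs[rd] = imm
            host_steps += 1
        elif op == "ADD":            # ADD rd, rs, rt  -> rd = rs + rt
            rd, rs, rt = args
            regs[rd] = regs[rs] + regs[rt]
            host_steps += 1
        elif op == "MULADD":         # MULADD rd, rs, rt -> rd = rd + rs * rt
            rd, rs, rt = args
            product = regs[rs] * regs[rt]   # first host operation
            regs[rd] = regs[rd] + product   # second host operation
            host_steps += 2
        else:
            raise ValueError(f"unknown guest opcode: {op}")
    return regs, host_steps


guest_program = [
    ("LOADI", "r1", 3),
    ("LOADI", "r2", 4),
    ("LOADI", "r3", 10),
    ("MULADD", "r3", "r1", "r2"),   # r3 = 10 + 3 * 4
    ("ADD", "r4", "r3", "r1"),      # r4 = 22 + 3
]
final_regs, steps = interpret(guest_program)
print(final_regs["r4"])  # 25
print(steps)             # 6
```

Five guest instructions cost six host operations here because `MULADD` expands into two, which is the one-to-many expansion that makes pure interpretation slow. A dynamic binary translator would instead translate a whole block once and reuse the result.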

B. Hardware Abstraction Level

Hardware-level virtualization is performed right on top of the bare hardware. On the one hand, this approach generates a virtual hardware environment for a VM. On the other hand, the process manages the underlying hardware through virtualization.

The idea is to virtualize a computer’s resources, such as its processors, memory, and I/O devices. The goal is to raise the hardware utilization rate by serving multiple users concurrently. The concept dates back to IBM's mainframe work of the 1960s and was implemented in the IBM VM/370. More recently, the Xen hypervisor has been applied to virtualize x86-based machines to run Linux or other guest OS applications.

C. Operating System Level

This is an abstraction layer between the traditional OS and user applications. OS-level virtualization creates isolated containers on a single physical server, together with the OS instances, to utilize the hardware and software in data centers. The containers behave like real servers. OS-level virtualization is commonly used in creating virtual hosting environments to allocate hardware resources among a large number of mutually distrusting users. It is also used, to a lesser extent, in consolidating server hardware by moving services on separate hosts into containers or VMs on one server.

D. Library Support Level

Most applications use APIs exported by user-level libraries rather than lengthy system calls to the OS. Since most systems provide well-documented APIs, such an interface becomes another candidate for virtualization.

Virtualization with library interfaces is possible by controlling the communication link between applications and the rest of the system through API hooks. The software tool WINE has implemented this approach to support Windows applications on top of UNIX hosts. Another example is vCUDA, which allows applications executing within VMs to leverage GPU hardware acceleration.
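The idea of an API hook can be sketched in a few lines of Python. This is only an illustration of interposing on library calls; it does not reflect how WINE or vCUDA are implemented. The guest API names `GuestGetTempDir` and `GuestPathExists` are hypothetical, as are the helper functions and the hook table.

```python
import os
import tempfile


def _host_temp_dir():
    # Host-side implementation backing the hypothetical guest call.
    return tempfile.gettempdir()


def _host_path_exists(path):
    return os.path.exists(path)


# Hook table: hypothetical guest API name -> host implementation.
API_HOOKS = {
    "GuestGetTempDir": _host_temp_dir,
    "GuestPathExists": _host_path_exists,
}


def call_guest_api(name, *args):
    """Intercept a guest library call and forward it to the host equivalent."""
    try:
        hook = API_HOOKS[name]
    except KeyError:
        raise NotImplementedError(f"no hook installed for {name}") from None
    return hook(*args)


temp_dir = call_guest_api("GuestGetTempDir")
print(call_guest_api("GuestPathExists", temp_dir))
```

The application only ever names the guest API; the hook layer decides which host facility satisfies the call, which is what makes the library interface a virtualization point.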

E. User-Application Level

Virtualization at the application level virtualizes an application as a VM. On a traditional OS, an application often runs as a process. Therefore, application-level virtualization is also known as process-level virtualization.

The main approach is to deploy high level language (HLL) VMs. In this case the virtualization layer sits as an application program on top of the operating system, and the layer exports an abstraction of a VM that can run programs written and compiled to a particular machine definition.

Any program written in the HLL and compiled for this VM will be able to run on it. The Microsoft .NET CLR and Java Virtual Machine (JVM) are examples of this class of VM. Other forms of application-level virtualization are known as application isolation, application sandboxing, or application streaming.

The process involves wrapping the application in a layer that is isolated from the host OS and other applications. The result is an application that is much easier to distribute and remove from user workstations. An example is the LANDesk application virtualization platform, which deploys software applications as self-contained, executable files in an isolated environment without requiring installation, system modifications, or elevated security privileges.

Virtualization Design Requirements

The design of a virtual system can become hard to distinguish from that of an operating system with similar functionality. In such cases, we need certain distinctions in the design of virtualized systems. The virtualization design requirements can be broadly viewed as follows:

1) Equivalence Requirement

A machine that is developed through virtualization must be logically equivalent to the real machine. The emulator needs to match the capabilities of the physical system in its computational performance. The emulated system must be able to execute all the applications and programs that are designed to execute on the real machine, with timing as the only considerable exception.

2) Efficiency Requirement

While taking the route of virtualization, the virtual machine must be as efficient in its performance as a real system. Virtualization is primarily done with a purpose of getting efficient software without the physical hardware. Thus, with the only possibility of compromise on the point of efficiency being the requirement for sharing of resources, an emulator must be capable of interpreting all the instructions that may be safely interpreted in a physical system.

3) Resource control requirements

A computer system is a combination of various resources including processors, memory and I/O devices. All these resources must be managed and controlled effectively by the VMM. The VMM must be able to enforce isolation between the virtualized systems. Neither the virtual machines nor the VMM should face interference in their operations from other machines, except where such interference is required for efficiency.

Virtualization Providers

VMware

The conversation about virtualization for small and medium-sized businesses usually starts with VMware. VMware was the company that put office virtualization on everyone’s action item list. The company offers a number of different solutions for businesses of different sizes with a wide variety of requirements. Its ease of use and robust security features have secured its reputation as one of the best options for virtualization at SMBs.

Citrix

An average user may not recognize the company name, but there is a good chance they already know its popular remote access tools, GoToMyPC and GoToMeeting. Citrix has specifically geared its virtualization software, XenApp, XenDesktop, and VDI-in-a-box, toward SMBs and claims that non-IT staff can easily manage and administer the services. It also provides a free trial to prove it.

Microsoft

Although it may be a little more difficult to manage without an in-house or outsourced IT staff, Microsoft’s Hyper-V option is hard to ignore considering its integration with the popular cloud platform Azure. If you just want to minimize the number of vendors in your network, Hyper-V offers everything you need from a virtualization service.

Oracle

This company just keeps getting bigger and bigger. Specializing in marketing software, it offers database management, cloud storage and customer relationship management software. If you’re using any of its services already, there could be benefits to enlisting its virtualization services as well. Oracle covers everything: server, desktop and app virtualization.

Amazon

Amazon’s EC2 platform hosts scalable virtual private servers. The ability to scale and configure capacity is EC2’s biggest draw for SMBs that are preparing for the possibility of rapid growth. While any virtualization service is rooted in scalability, Amazon leads the pack in how quickly and finely you can adjust your solution to your individual needs.

Using VM technology, a new computing mode known as cloud computing is emerging. Cloud computing is transforming the computing landscape by shifting the hardware and staffing costs of managing a computational center to third parties, just like banks. However, cloud computing has at least two challenges.

The first challenge is the ability to use a variable number of physical machines and VM instances depending on the needs of a problem. For example, a task may need only a single CPU during some phases of execution but hundreds of CPUs at other times. The second challenge is the slow operation of instantiating new VMs.

Currently, new VMs originate either as fresh boots or as replicas of a template VM, unaware of the current application state. Therefore, a large amount of research and development is being done to better support cloud computing.

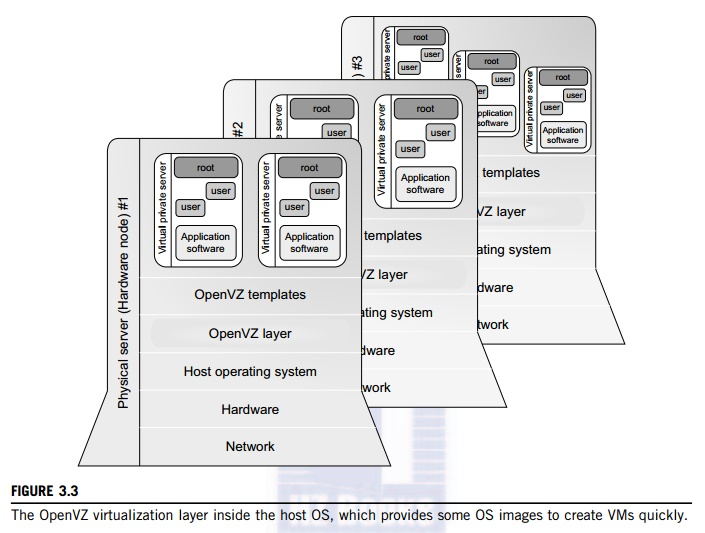

1) OS-Level Virtualization

As mentioned, it is slow to initialize a hardware-level VM because each VM creates its own image from scratch. In a cloud computing environment, thousands of VMs may need to be initialized at the same time. Besides the slow operation, storing the VM images also becomes an issue.

As a matter of fact, there is considerable repeated content among VM images. Full virtualization at the hardware level also has the drawbacks of slow performance and low density, while para-virtualization requires modifying the guest OS. Reducing the performance overhead of hardware-level virtualization may even require hardware modification.

OS-level virtualization provides a suitable solution for these hardware-level virtualization issues. Operating system virtualization inserts a virtualization layer inside an operating system to partition a system’s physical resources. It combines multiple isolated VMs within a single operating system kernel.

This kind of VM is often called a virtual execution environment (VE), Virtual Private System (VPS), or simply a container. From the user’s point of view, VEs look like real servers. This means a VE has its own set of processes, file system, user accounts, network interfaces with IP addresses, routing tables, firewall rules, and other personal settings.

Although VEs can be customized for different people, they share the same operating system kernel. Therefore, OS-level virtualization is also called single-OS image virtualization. Figure 5.3(I) illustrates operating system virtualization from the point of view of a machine stack.

2) Advantages of OS Extensions

Compared to hardware-level virtualization, OS extensions have two advantages:

(1) VMs at the operating system level have low startup/shutdown costs, minimal resource requirements, and high scalability.

(2) For an OS-level VM, it is possible for a VM and its host environment to synchronize state changes when necessary.

These benefits can be achieved via two mechanisms of OS-level virtualization:

(1) All OS-level VMs on the same physical machine share a single operating system kernel.

(2) The virtualization layer can be designed in a way that allows processes in VMs to access as many resources of the host machine as possible, but never to modify them.

In cloud computing, the first and second benefits can be used to overcome the defects of slow initialization of VMs at the hardware level, and being unaware of the current application state, respectively.

Figure 5.3(I): The OpenVZ virtualization layer inside the host OS, which provides OS images to create VMs quickly.

3) Disadvantages of OS Extensions

The main drawback of OS extensions is that all the OS-level VMs on a single container must have the same kind of guest operating system. That is, although different OS-level VMs may have different software distributions, they must belong to the same OS family.

For example, a Windows distribution such as Windows XP cannot run on a Linux-based container. Users have various preferences: some prefer Windows, others prefer Linux or another OS. This is a challenge for OS-level virtualization in such cases.

Figure 5.3(I) illustrates the concept of OS-level virtualization. The virtualization layer is inserted inside the OS to partition the hardware resources for multiple VMs to run their applications in multiple virtual environments. To implement OS-level virtualization, isolated execution environments are created on a single OS kernel. The access requests from a VM must be redirected to the VM’s local resource partition on the physical machine.

For example, the chroot command in a UNIX system can create multiple virtual root directories within a host OS. There are two ways to implement virtual root directories: duplicating common resources to each VM partition, or sharing most resources with the host environment and creating private resource copies on the VM only on demand.
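The difference between the two approaches can be sketched in Python using plain dictionaries in place of file systems. This is a toy model, not how chroot or any container runtime is implemented; the file names, contents and helper functions are hypothetical. The second approach relies on `collections.ChainMap`, where lookups fall through to the shared host view and writes land only in the VM's private layer.

```python
from collections import ChainMap

# Hypothetical host resources, modelled as path -> content.
host_files = {
    "/bin/sh": "shell v1",
    "/etc/hosts": "127.0.0.1 localhost",
}


def make_duplicated_vm(host):
    """Approach 1: copy every common resource into the VM partition."""
    return dict(host)


def make_shared_vm(host):
    """Approach 2: share the host view, keep private copies only on demand."""
    return ChainMap({}, host)


vm_a = make_duplicated_vm(host_files)
vm_b = make_shared_vm(host_files)

# vm_b changes one file: only that file gets a private copy.
vm_b["/etc/hosts"] = "10.0.0.5 vm-b"

print(len(vm_a))                  # 2  (everything was copied up front)
print(len(vm_b.maps[0]))          # 1  (only the modified file is private)
print(vm_b["/bin/sh"])            # shell v1  (still served by the host)
print(host_files["/etc/hosts"])   # 127.0.0.1 localhost  (host unchanged)
```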

The first approach incurs significant resource costs and overhead on the physical machine, which cancels out the benefits of OS-level virtualization compared with hardware-assisted virtualization. For this reason, OS-level virtualization is often a second choice.

4) Virtualization on Linux or Windows Platforms

Most reported OS-level virtualization systems are Linux-based. Virtualization support on the Windows-based platform is in the research stage. The Linux kernel offers an abstraction layer to allow software processes to work with and operate on resources without knowing the hardware details.

New hardware may require a new Linux kernel to support it. Therefore, different Linux platforms use patched kernels to provide special support for extended functionality. However, most Linux platforms are not tied to a special kernel. In such cases, a host can run multiple VMs at the same time on the same hardware.

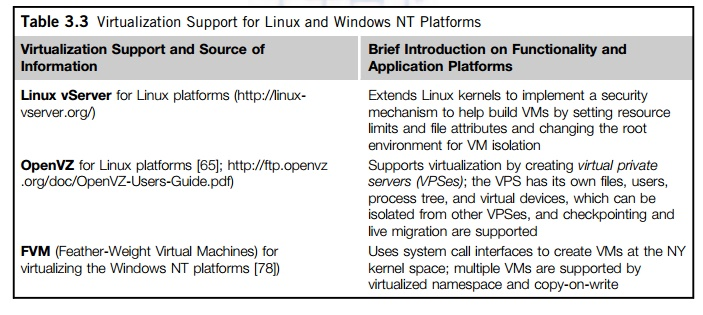

Two OS-level tools, Linux vServer and OpenVZ, support Linux platforms to run other platform-based applications through virtualization. A third tool, FVM, was developed for virtualization on the Windows NT platform.

Example of Virtualization Support for the Linux Platform

OpenVZ is an operating system level tool designed to support Linux platforms in creating virtual environments for running VMs under different guest operating systems. It is an open source, container-based virtualization solution built on Linux. To support virtualization and isolation of multiple subsystems, limited resource management, and checkpointing, OpenVZ modifies the Linux kernel.

The OpenVZ system is shown in Figure 5.3(I), in which several VPSes can run at the same time on one physical machine. These VPSes look like normal Linux servers. Each VPS has its own files, users and groups, process tree, virtual network, virtual devices, and IPC through semaphores and messages.

The resource management subsystem of OpenVZ consists of three components: two-level disk allocation, a two-level CPU scheduler, and a resource controller. The amount of disk space a VM can use is set by the OpenVZ server administrator. This is the first level of disk allocation. Each VM acts as a standard Linux system, so the VM administrator is responsible for allocating disk space to each user and group. This is the second level.
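The two levels of disk allocation can be illustrated with a short Python sketch. It models only the bookkeeping idea and is not OpenVZ's implementation or command-line interface; the `Container` class, its method and the unit-less numbers are assumptions.

```python
class Container:
    """Toy model of two-level disk allocation for one VM."""

    def __init__(self, name, disk_limit):
        self.name = name
        self.disk_limit = disk_limit   # first level: set by the server administrator
        self.user_quotas = {}          # second level: set by the VM administrator

    def set_user_quota(self, user, quota):
        # Sum the quotas of all other users, so re-setting a quota is allowed.
        others = sum(q for u, q in self.user_quotas.items() if u != user)
        if others + quota > self.disk_limit:
            raise ValueError(
                f"{self.name}: quotas would exceed the container limit "
                f"({others + quota} > {self.disk_limit})"
            )
        self.user_quotas[user] = quota


vps = Container("vps1", disk_limit=100)   # the server admin grants 100 units
vps.set_user_quota("alice", 60)           # the VM admin splits them among users
vps.set_user_quota("bob", 40)
try:
    vps.set_user_quota("carol", 1)        # nothing left inside the container
except ValueError as err:
    print(err)
```

The VM administrator is free to divide space inside the container, but can never hand out more than the server administrator granted to the container as a whole.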

Figure 5.3(II) shows virtualization support for Linux and Windows NT Platforms.

The first-level CPU scheduler of OpenVZ decides which VM to give the time slice to, taking into account the virtual CPU priority and limit settings. The second-level CPU scheduler is the same as the one used by Linux. OpenVZ has a set of 20 parameters, chosen to cover all aspects of VM operation.
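The two scheduling levels can be sketched in Python as follows. This is a simplified illustration, not OpenVZ's algorithm: the `weight` attribute is a hypothetical stand-in for the CPU priority setting, the first level simply favours the VM that has used the least CPU relative to its weight, and round-robin stands in for the ordinary Linux scheduler at the second level.

```python
from collections import deque


class VM:
    def __init__(self, name, weight, processes):
        self.name = name
        self.weight = weight            # hypothetical stand-in for CPU priority
        self.used = 0                   # time slices consumed so far
        self.run_queue = deque(processes)

    def next_process(self):
        # Second level: plain round-robin among the VM's own processes.
        process = self.run_queue[0]
        self.run_queue.rotate(-1)
        return process


def schedule(vms, slices):
    """Return the (VM, process) pair chosen for each time slice."""
    timeline = []
    for _ in range(slices):
        # First level: pick the VM with the lowest usage relative to its weight.
        vm = min(vms, key=lambda v: v.used / v.weight)
        vm.used += 1
        timeline.append((vm.name, vm.next_process()))
    return timeline


vms = [VM("a", weight=2, processes=["a1", "a2"]),
       VM("b", weight=1, processes=["b1"])]
for slot in schedule(vms, 6):
    print(slot)
```

Over six time slices, VM `a` receives four and VM `b` receives two, matching their 2:1 weights, while the processes inside `a` alternate.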

The resources that a VM uses are therefore well controlled. OpenVZ also supports checkpointing and live migration. The complete state of a VM can quickly be saved to a disk file. This file can then be transferred to another physical machine and the VM restored there. The whole process takes only a few seconds. However, there is still a delay in processing because the established network connections are also migrated.
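The save, transfer and restore cycle can be illustrated with a minimal Python sketch. It captures only the idea of serializing state to a file and reloading it elsewhere; it is not OpenVZ's checkpoint format or tooling. The state dictionary, the function names and the file name are hypothetical, and a temporary directory stands in for the transfer to another machine.

```python
import json
import os
import tempfile


def checkpoint(vm_state, path):
    """Save the complete (toy) VM state to a disk file."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(vm_state, handle)


def restore(path):
    """Rebuild the VM state from a checkpoint file, e.g. on another host."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


# Hypothetical VM state; a real checkpoint would also hold memory, open files
# and network connections.
state = {"name": "vps1", "memory_pages": [1, 2, 3], "program_counter": 4096}

with tempfile.TemporaryDirectory() as transfer_dir:
    image = os.path.join(transfer_dir, "vps1.ckpt")
    checkpoint(state, image)      # source machine
    restored = restore(image)     # destination machine

print(restored == state)  # True
```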

Virtualization is achieved through software known as the Virtual Machine Monitor (VMM) or hypervisor. The software can be used in two ways, forming two different structures of virtualization: hosted virtualization and bare-metal virtualization.

A. Hosted Structure

The hosted virtualization structure lets you run various guest application windows of your own on top of a base OS with the help of the VMM, which is also called the hypervisor. One of the most popular base operating systems is Windows on x86. Examples of the hosted virtualization structure include extensively used products such as VMware Workstation and Parallels Desktop for Mac. The following figure shows the hosted virtualization structure.

Figure 5.4 (I) Hosted virtualization structure

B. I/O Access

The virtual OS in this virtualization structure has limited access to I/O devices. You can use only a definite subset of I/O devices with your guest systems while using hosted virtual machines. The I/O connections to a given physical system are owned by the host system only, while the VMM presents an emulated view of them to every guest machine running on the same base system.

Non-generic devices do not make themselves known to the VMM, so the VMM cannot provide any view of them to the virtual machines. Only generic devices, such as network interface cards (NICs) and CD-ROM drives, can be emulated in this structure.

A pass-through facility is also provided in various hosted virtualization solutions. This facility enables an individual virtual machine to access USB devices directly from the port. For example, you can acquire data directly from your guest system by accessing an NI USB data acquisition device.

Benefits and Drawbacks

Because I/O devices can be partitioned between separate virtual systems, which can improve I/O performance, you can also run a real-time OS on a system with the bare-metal virtualization structure. A bare-metal VMM can be used for bounding the interrupt latency and enabling deterministic performance, because the host OS is not relied upon. Hence a single hardware platform can run real-time and general-purpose operating systems in parallel with bare-metal virtualization.

Certain drawbacks are also associated with the bare-metal virtualization structure. The hypervisor must include drivers for the supported hardware platforms, in addition to the drivers required for sharing I/O devices among the guest systems. It is also harder to install a VMM in a bare-metal structure than in the hosted structure, because it is not installed on top of a base OS.

There are three classes of VM architecture. The figure shows the architecture of a machine before and after virtualization. Before virtualization, the operating system manages the hardware. After virtualization, a virtualization layer is inserted between the hardware and the operating system.

In this case, the virtualization layer is responsible for converting portions of the real hardware into virtual hardware. Therefore, different operating systems such as Linux and Windows can run on the same physical machine at the same time.

Depending on the position of the virtualization layer, there are several classes of VM architectures, namely the hypervisor architecture, para-virtualization, and host-based virtualization. The hypervisor is also known as the VMM (Virtual Machine Monitor); both terms refer to the same virtualization operations.

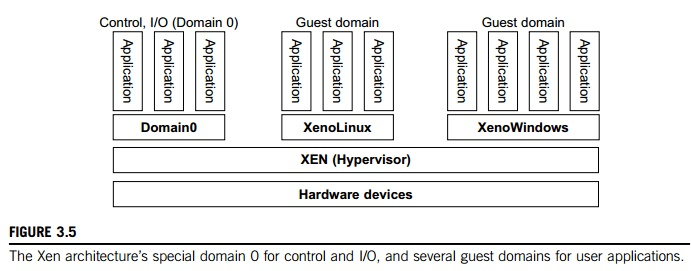

Hypervisor and Xen Architecture

The hypervisor supports hardware-level virtualization on bare-metal devices such as the CPU, memory, disk, and network interfaces. The hypervisor software sits directly between the physical hardware and its OS. This virtualization layer is referred to as either the VMM or the hypervisor.

The hypervisor provides hypercalls for the guest operating systems and applications. Depending on the functionality, a hypervisor can assume a micro-kernel architecture, like Microsoft Hyper-V, or a monolithic hypervisor architecture, like VMware ESX for server virtualization.

A micro-kernel hypervisor contains only the basic and unchanging functions, such as physical memory management and processor scheduling. The device drivers and other changeable components are outside the hypervisor. A monolithic hypervisor implements all of these functions, including those of the device drivers. Therefore, the hypervisor code of a micro-kernel hypervisor is smaller than that of a monolithic hypervisor. In either case, a hypervisor must be able to convert physical devices into virtual resources dedicated to the deployed VMs.

Kernel-based Virtual Machine (KVM) and Xen are two open source technologies that provide virtualization support for the Linux operating system.

KVM provides virtualization support for operating systems on x86 hardware that has virtualization extensions (Intel VT or AMD-V). KVM consists of two kinds of modules: a loadable kernel module that provides the core virtualization infrastructure, and a processor-specific module. KVM also requires a modified QEMU (Quick Emulator) to implement virtualization, although work is under way to incorporate the required changes upstream.

KVM is used to host multiple VMs that run unmodified Linux or Windows OS images. Each VM is provided with its own set of virtualized hardware components, including a network card, disk, graphics adapter, and so on.

Some of the important features of KVM include the following:

1) QEMU Monitor Protocol (QMP)

2) Kernel Samepage Merging (KSM)

3) KVM Paravirtual Clock

4) CPU Hotplug Support

5) Vmchannel

6) Migration

7) Vhost

8) SCSI Disk Emulation

9) Virtio Devices

10) CPU Clustering

The Xen hypervisor is the only bare-metal hypervisor available as open source. With Xen, a single machine can run multiple instances of an OS, or several different operating systems, in parallel. Various applications, both open source and commercial, are based on the Xen hypervisor, which provides different virtualization solutions for them. For example, the Xen hypervisor is used for server virtualization, desktop virtualization, security applications, IaaS, and embedded and hardware appliances. The Xen hypervisor is currently the most widely used virtualization technique in production environments.

The key features of the Xen hypervisor include the following:

1) Robustness and security

Xen follows the microkernel design approach, offering a higher level of robustness and security to applications than other hypervisors.

2) Scope for Other OS

The Xen hypervisor can run with Linux as the main control stack, and it can also be adapted to other operating systems.

3) Isolation of Drivers from the Rest of the System

The Xen hypervisor allows the main device drivers to run inside a VM. If a driver crashes or is compromised, it can be restarted by rebooting the VM that contains it, without affecting the rest of the system.

4) Support for Paravirtualization

The Xen hypervisor provides optimization support for paravirtualized guests so that they can run as VMs. Paravirtualized guests can run faster than guests that rely on hardware virtualization extensions. Xen can also be used on hardware that has no support for virtualization extensions.

Xen is an open source hypervisor program developed by Cambridge University. Xen is a microkernel hypervisor, which separates the policy from the mechanism. The Xen hypervisor implements all the mechanisms, leaving the policy to be handled by Domain 0.

Xen does not include any device drivers natively. It only provides a mechanism by which a guest OS can have direct access to the physical devices. Xen provides a virtual environment located between the hardware and the OS.

A number of vendors are in the process of developing commercial Xen hypervisors, among them Citrix XenServer and Oracle VM. The core components of a Xen system are the hypervisor, kernel, and applications. The organization of the three components is important. As in other virtualization systems, many guest operating systems can run on top of the hypervisor. However, not all guest OSes are created equal, and one in particular controls the others.

The guest OS that has control ability is called Domain 0, and the others are called Domain U. Domain 0 is a privileged guest OS of Xen. It is loaded first when Xen boots, without any file system drivers being available. Domain 0 is designed to access hardware directly and manage devices. Therefore, one of the responsibilities of Domain 0 is to allocate and map hardware resources for the guest domains.

Figure 5.7 (I) The Xen architecture’s special Domain 0 for control and I/O, and several guest domains for user applications.

Xen is based on Linux, and its security level is C2. Its management VM is called Domain 0, which has the privilege to manage the other VMs implemented on the same host. If Domain 0 is compromised, the attacker can control the entire system. So, in the VM system, security policies are needed to improve the security of Domain 0.

Domain 0, behaving as a VMM, allows users to create, copy, save, read, modify, share, migrate, and roll back VMs as easily as manipulating a file, which gives users great flexibility. However, it also brings a series of security problems during the software life cycle and data lifetime.

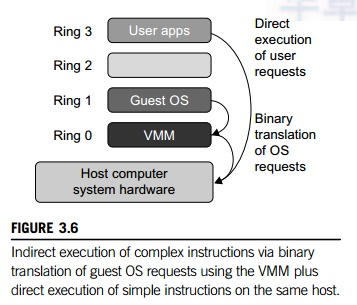

Depending on the implementation technology, hardware virtualization can be divided into two categories: full virtualization and host-based virtualization. Full virtualization does not need to modify the host OS. It relies on binary translation to trap and virtualize the execution of certain sensitive, non-virtualizable instructions.

The guest operating systems and their applications consist of noncritical and critical instructions. In a host-based system, both a host OS and a guest OS are used. A virtualization software layer is built between the host OS and the guest OS.

A. Full Virtualization

With full virtualization, noncritical instructions run on the hardware directly, while critical instructions are discovered and replaced with traps into the VMM to be emulated by software. Both the hypervisor and VMM approaches are considered full virtualization.

Only the critical instructions are trapped into the VMM, because binary translation can incur a large performance overhead. Noncritical instructions do not control hardware or threaten the security of the system, but critical instructions do. Therefore, running noncritical instructions directly on the hardware not only promotes efficiency but also preserves system security.

B. Binary Translation of Guest OS Requests Using a VMM

This method is implemented by VMware and many other software companies. As shown in Figure 5.8 (I), VMware places the VMM at Ring 0 and the guest OS at Ring 1. The VMM scans the instruction stream and identifies the privileged, control-sensitive, and behavior-sensitive instructions.

Once these instructions are identified, they are trapped into the VMM, which emulates their behavior. The method used in this emulation is called binary translation. Therefore, full virtualization combines binary translation and direct execution. The guest OS is completely decoupled from the underlying hardware, and it is unaware that it is being virtualized.

The performance of full virtualization may not be ideal, because it involves binary translation, which is rather time-consuming. In particular, the full virtualization of I/O-intensive applications is a big challenge. Binary translation employs a code cache to store translated hot instructions to improve performance, but this increases the cost of memory usage. At the time of this writing, the performance of full virtualization on the x86 architecture is usually more than 80 percent of that of the host machine.
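The idea of combining direct execution with binary translation and a code cache can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the instruction names, the `SENSITIVE` set, and the `CALL_VMM(...)` rewrite are hypothetical and do not correspond to any real VMM.

```python
from typing import Dict, List, Tuple

# Hypothetical set of sensitive instructions, chosen only for illustration.
SENSITIVE = {"CLI", "STI", "OUT"}


class BinaryTranslator:
    """Toy translator: rewrites sensitive instructions and caches the result."""

    def __init__(self) -> None:
        self.code_cache: Dict[Tuple[str, ...], List[str]] = {}
        self.translations = 0  # number of blocks that were actually translated

    def translate(self, block: List[str]) -> List[str]:
        key = tuple(block)
        if key in self.code_cache:
            # Hot block: reuse the cached translation instead of redoing the work.
            return self.code_cache[key]
        self.translations += 1
        translated = [
            # Sensitive instructions become calls into the VMM;
            # noncritical instructions pass through unchanged.
            f"CALL_VMM({op})" if op in SENSITIVE else op
            for op in block
        ]
        self.code_cache[key] = translated  # costs memory, saves repeated translation
        return translated


translator = BinaryTranslator()
block = ["MOV", "CLI", "ADD"]
first = translator.translate(block)
second = translator.translate(block)  # served from the code cache
print(first, translator.translations)
```

The second call returns the cached block without translating again, which is the performance benefit the code cache buys at the price of extra memory.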

C. Host-Based Virtualization

An alternative VM architecture is to install a virtualization layer on top of the host OS. The host OS is still responsible for managing the hardware. The guest operating systems are installed and run on top of the virtualization layer. Dedicated applications may run on the VMs, and some other applications can also run directly on the host OS.

Figure 5.8 (I) Indirect execution of complex instructions through binary translation of guest OS requests using the VMM, plus direct execution of simple instructions on the same host.

This host-based architecture has some distinct advantages. First, the user can install this VM architecture without modifying the host OS. The virtualizing software can rely on the host OS to provide device drivers and other low-level services, which simplifies VM design and deployment. Second, the host-based approach suits many host machine configurations. Compared with the hypervisor architecture, however, the performance of the host-based architecture can be low.

When an application requests hardware access, the request passes through four layers of mapping, which degrades performance significantly. When the ISA of a guest OS is different from the ISA of the underlying hardware, binary translation must be adopted. Although the host-based architecture is flexible, its performance may be too low to be useful in practice.

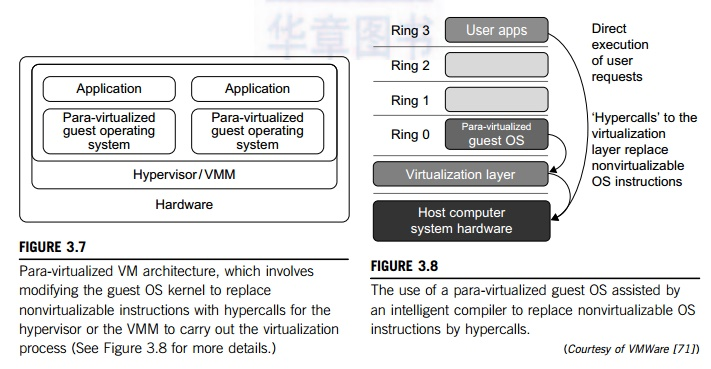

Para-virtualization needs to modify the guest operating systems. A para-virtualized VM provides special APIs that require substantial OS modifications in user applications. Performance degradation is a critical issue for a virtualized system. Nobody wants to use a VM if it is much slower than using a physical machine.

The virtualization layer can be inserted at different positions in a machine software stack. Para-virtualization attempts to reduce the virtualization overhead, and thus improve performance, by modifying only the guest OS kernel.

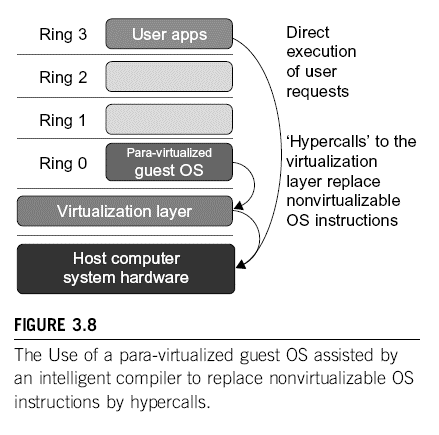

Figure 5.9 (I) illustrates the concept of a para-virtualized VM architecture. The guest operating systems are para-virtualized. They are assisted by an intelligent compiler that replaces the non-virtualizable OS instructions with hypercalls. The standard x86 processor offers four privilege rings: Rings 0, 1, 2, and 3.

The lower the ring number, the higher the privilege of the instruction being executed. The OS is responsible for managing the hardware, so it and its privileged instructions execute at Ring 0, while user-level applications run at Ring 3. The simplest example of para-virtualization is KVM.

A. Para-Virtualization Architecture

When the x86 processor is virtualized, a virtualization layer is inserted between the hardware and the OS. According to the x86 ring definition, the virtualization layer should also be installed at Ring 0. Having different instructions at Ring 0 may cause some problems.

Para-virtualization replaces non-virtualizable instructions with hypercalls that communicate directly with the hypervisor or VMM. However, once the guest OS kernel is modified for virtualization, it can no longer run on the hardware directly.

Figure 5.9 (I) Para-virtualized VM architecture, which involves modifying the guest OS kernel to replace non-virtualizable instructions with hypercalls for the hypervisor or the VMM to carry out the virtualization process.

Figure 5.9 (II) The use of a para-virtualized guest OS assisted by an intelligent compiler to replace non-virtualizable OS instructions with hypercalls.

Although para-virtualization reduces the overhead, it introduces other problems:

1) Its compatibility and portability may be in doubt, because it must support the unmodified OS as well.

2) The cost of maintaining para-virtualized operating systems is high, because they may require deep OS kernel modifications.

Finally, the performance advantage of para-virtualization varies greatly with the workload. Compared with full virtualization, para-virtualization is relatively easy and more practical. The main problem with full virtualization is the low performance of binary translation, which is difficult to speed up. Therefore, many virtualization products employ the para-virtualization architecture. The popular Xen, KVM, and VMware ESX are good examples.

B. KVM (Kernel-Based VM)

This is a Linux para-virtualization system that has been part of the Linux kernel since version 2.6.20. Memory management and scheduling activities are carried out by the existing Linux kernel. KVM does the rest, which makes it simpler than a hypervisor that controls the entire machine.

KVM is a hardware-assisted para-virtualization tool that improves performance and supports unmodified guest OSes such as Windows, Linux, Solaris, and other UNIX variants.

C. Para-Virtualization with Compiler Support

Unlike the full virtualization architecture, which intercepts and emulates privileged and sensitive instructions at runtime, para-virtualization handles these instructions at compile time. The guest OS kernel is modified to replace the privileged and sensitive instructions with hypercalls to the hypervisor or VMM.

The guest OS running in a guest domain may run at Ring 1 instead of Ring 0. This implies that the guest OS may not be able to execute some privileged and sensitive instructions. These restricted instructions are implemented by hypercalls to the hypervisor.

After the instructions are replaced with hypercalls, the modified guest OS emulates the behavior of the original guest OS. On a UNIX system, a system call involves an interrupt or service routine. The hypercalls use a dedicated service routine in Xen.
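The compile-time replacement described above can be illustrated with a small Python sketch. It is a simplified model, not Xen's real interface: the operation names, the `HYPERCALLS` table, and the hypercall names such as `hc_mmu_update` are hypothetical and exist only for this example.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping from restricted kernel operations to hypercall names.
HYPERCALLS: Dict[str, str] = {
    "WRITE_PAGE_TABLE": "hc_mmu_update",
    "MASK_INTERRUPTS": "hc_irq_mask",
}

Op = Tuple[str, str]  # (kind, name), where kind is "NATIVE" or "HYPERCALL"


def paravirtualize(kernel_ops: List[str]) -> List[Op]:
    """'Compile-time' pass: replace restricted operations with hypercalls."""
    return [
        ("HYPERCALL", HYPERCALLS[op]) if op in HYPERCALLS else ("NATIVE", op)
        for op in kernel_ops
    ]


class Hypervisor:
    """Records every hypercall it services on behalf of the guest."""

    def __init__(self) -> None:
        self.handled: List[str] = []

    def hypercall(self, name: str) -> None:
        self.handled.append(name)


def run_guest(ops: List[Op], hypervisor: Hypervisor) -> List[str]:
    """Run the modified kernel: native ops execute directly, the rest go to the hypervisor."""
    native: List[str] = []
    for kind, name in ops:
        if kind == "HYPERCALL":
            hypervisor.hypercall(name)
        else:
            native.append(name)
    return native


hv = Hypervisor()
modified_kernel = paravirtualize(["ADD", "WRITE_PAGE_TABLE", "LOAD", "MASK_INTERRUPTS"])
ran_natively = run_guest(modified_kernel, hv)
print(ran_natively, hv.handled)
```

Note that the rewriting happens once, before the guest runs, so nothing has to be scanned or trapped at runtime. That is the key difference from binary translation.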

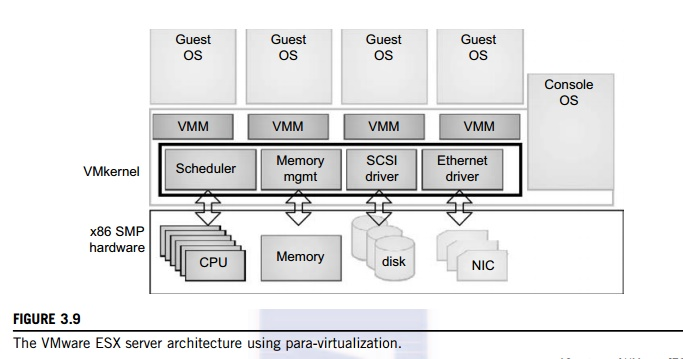

Example of VMware ESX Server for Para-Virtualization

VMware pioneered the software market for virtualization. The company has developed virtualization tools for desktop systems and servers, as well as virtual infrastructure for large data centers. ESX is a VMM, or hypervisor, for bare-metal x86 symmetric multiprocessing (SMP) servers. It accesses hardware resources such as I/O directly and has complete resource management control.

An ESX-enabled server has four components: a virtualization layer, a resource manager, hardware interface components, and a service console. To improve performance, the ESX server employs a para-virtualization architecture in which the VM kernel interacts directly with the hardware without involving the host OS.

The VMM layer virtualizes the physical hardware resources such as the CPU, memory, network and disk controllers, and human interface devices. Every VM has its own set of virtual hardware resources. The resource manager allocates CPU, memory, disk, and network bandwidth and maps them to the virtual hardware resource set of each VM created. The hardware interface components are the device drivers and the VMware ESX Server File System. The service console is responsible for booting the system and initiating the execution of the VMM layer and the resource manager. It also facilitates the process for system administrators.

Figure 5.9 (III) The VMware ESX server architecture using para-virtualization.

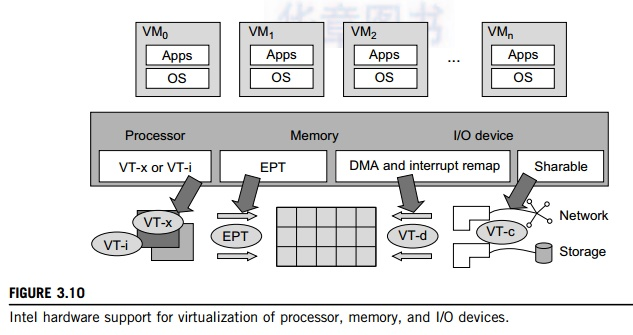

To support virtualization, processors such as the x86 employ a special running mode and instructions, known as hardware-assisted virtualization. The VMM and the guest OS run in different modes, and all sensitive instructions of the guest OS and its applications are trapped in the VMM.

To save processor states, mode switching is completed by hardware. For the x86 architecture, Intel and AMD have proprietary technologies for hardware-assisted virtualization.

A. Hardware Support for Virtualization

Modern operating systems and processors permit multiple processes to run simultaneously. If there were no protection mechanism in a processor, instructions from different processes could all access the hardware directly and cause a system crash.

Therefore, all processors have at least two modes, user mode and supervisor mode, to ensure controlled access to critical hardware. Instructions running in supervisor mode are called privileged instructions. Other instructions are unprivileged instructions.

In a virtualized environment, it is more difficult to make operating systems and applications run correctly because there are more layers in the machine stack.

At the time of this writing, many hardware virtualization products were available. VMware Workstation is a VM software suite for x86 and x86-64 computers. It allows users to set up multiple x86 and x86-64 virtual computers and to use these VMs simultaneously with the host operating system. VMware Workstation assumes host-based virtualization.

Xen is a hypervisor for use on IA-32, x86-64, Itanium, and PowerPC 970 hosts. Xen modifies Linux to serve as the lowest and most privileged layer, or hypervisor. One or more guest OSes can run on top of the hypervisor. KVM (Kernel-based Virtual Machine) is a Linux kernel virtualization infrastructure.

KVM can support hardware-assisted virtualization and para-virtualization by using Intel VT-x or AMD-V and the VirtIO framework, respectively. The VirtIO framework includes a paravirtual Ethernet card, a disk I/O controller, a balloon device for adjusting guest memory usage, and a VGA graphics interface using VMware drivers.

Example: Hardware Support for Virtualization in the Intel x86 Processor

Since software-based virtualization techniques are complicated and incur performance overhead, Intel provides a hardware-assist technique to make virtualization easier and improve performance.

Figure 5.10 (I) provides an overview of Intel’s full virtualization techniques. For processor virtualization, Intel offers the VT-x or VT-i technique. VT-x adds a privileged mode and some instructions to processors. This enhancement traps all sensitive instructions in the VMM automatically.

For memory virtualization, Intel offers the EPT (Extended Page Table), which translates a VM's physical addresses to the machine’s physical addresses to improve performance. For I/O virtualization, Intel implements VT-d and VT-c.

Figure 5.10 (I) Intel hardware support for virtualization of processor, memory, and I/O devices.

B. CPU Virtualization

A VM is a duplicate of an existing computer system in which the majority of the VM instructions are executed on the host processor in native mode. Thus, the unprivileged instructions of VMs run directly on the host machine for higher efficiency. Other, critical instructions must be handled carefully for correctness and stability. The critical instructions are divided into three categories: privileged instructions, control-sensitive instructions, and behavior-sensitive instructions.

Privileged instructions execute in a privileged mode and will be trapped if executed outside this mode. Control-sensitive instructions attempt to change the configuration of the resources used. Behavior-sensitive instructions behave differently depending on the configuration of resources, including the load and store operations over the virtual memory.

A CPU architecture is virtualizable if it supports running the VM’s privileged and unprivileged instructions in the CPU’s user mode while the VMM runs in supervisor mode. When the privileged instructions of a VM, including control-sensitive and behavior-sensitive instructions, are executed, they are trapped in the VMM.
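The trap-and-emulate behavior described here can be sketched in Python as follows. This is a minimal model under simplifying assumptions: the `PRIVILEGED` set is a small illustrative subset (HLT and LGDT are privileged on x86), and `cpu_execute`, `PrivilegeTrap`, and `VMM` are hypothetical names invented for this sketch.

```python
from typing import List

# Small illustrative subset of privileged instructions.
PRIVILEGED = {"HLT", "LGDT"}


class PrivilegeTrap(Exception):
    """Raised when a privileged instruction is attempted outside supervisor mode."""


def cpu_execute(instr: str, mode: str) -> str:
    """Model of the processor's mode check (hypothetical helper)."""
    if instr in PRIVILEGED and mode != "supervisor":
        raise PrivilegeTrap(instr)
    return instr


class VMM:
    """Runs the guest in user mode and emulates whatever traps."""

    def __init__(self) -> None:
        self.emulated: List[str] = []

    def run_guest(self, instructions: List[str]) -> List[str]:
        executed_directly: List[str] = []
        for instr in instructions:
            try:
                # The guest, including its kernel, runs in user mode.
                executed_directly.append(cpu_execute(instr, mode="user"))
            except PrivilegeTrap:
                # The trap hands control to the VMM, which emulates the instruction.
                self.emulated.append(instr)
        return executed_directly


vmm = VMM()
direct = vmm.run_guest(["ADD", "LGDT", "MOV", "HLT"])
print(direct, vmm.emulated)
```

Unprivileged instructions never leave the fast path, while each privileged one costs a trap into the VMM.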

In this case, the VMM acts as a unified mediator for hardware access from different VMs to guarantee the correctness and stability of the whole system. However, not all CPU architectures are virtualizable. RISC CPU architectures can be naturally virtualized because all of their control-sensitive and behavior-sensitive instructions are privileged instructions.

By contrast, x86 CPU architectures were not primarily designed to support virtualization. This is because about 10 sensitive instructions, such as SGDT and SMSW, are not privileged instructions. When these instructions execute in a virtualized environment, they cannot be trapped in the VMM.
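The virtualizability condition can be expressed as a simple set check: every sensitive instruction must also be privileged, so that it traps. The Python sketch below assumes a tiny illustrative subset of x86 instructions (SGDT and SMSW are the two named in the text; LGDT and LMSW are their privileged counterparts); the function names are hypothetical.

```python
from typing import Iterable, List


def is_virtualizable(sensitive: Iterable[str], privileged: Iterable[str]) -> bool:
    """True if every sensitive instruction is privileged, and therefore traps."""
    return set(sensitive) <= set(privileged)


def untrappable(sensitive: Iterable[str], privileged: Iterable[str]) -> List[str]:
    """Sensitive instructions that would slip past the VMM without trapping."""
    return sorted(set(sensitive) - set(privileged))


# Illustrative subset only, not the full x86 instruction set.
x86_privileged = {"LGDT", "LMSW"}
x86_sensitive = {"LGDT", "LMSW", "SGDT", "SMSW"}

print(is_virtualizable(x86_sensitive, x86_privileged))
print(untrappable(x86_sensitive, x86_privileged))
```

For an architecture where the two sets coincide, `is_virtualizable` returns `True` and plain trap-and-emulate is enough; for classic x86 the leftover instructions are what binary translation, para-virtualization, or hardware assistance must handle.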

On a native UNIX-like system, a system call triggers the 80h interrupt and passes control to the OS kernel. The interrupt handler in the kernel is then invoked to process the system call.

On a para-virtualization system such as Xen, a system call in the guest OS first triggers the 80h interrupt normally. Almost at the same time, the 82h interrupt in the hypervisor is triggered, and control is passed on to the hypervisor as well. When the hypervisor completes its task for the guest OS system call, it passes control back to the guest OS kernel. The guest OS kernel may also invoke hypercalls while it is running. Although para-virtualization of a CPU lets unmodified applications run in the VM, it causes a small performance penalty.

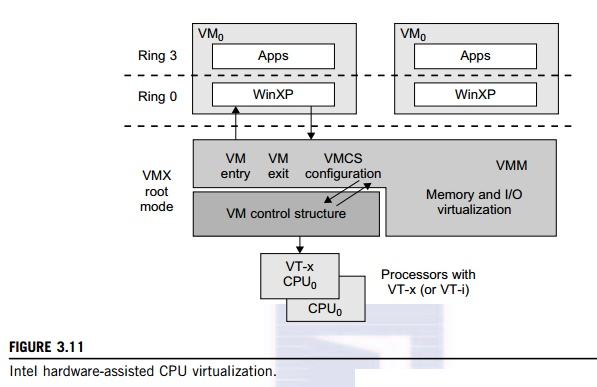

1) Hardware-Assisted CPU Virtualization

This technique attempts to simplify virtualization, because full virtualization and para-virtualization are complicated. Intel and AMD add an additional privilege mode level (often called Ring -1) to x86 processors. Therefore, operating systems can still run at Ring 0 while the hypervisor runs at Ring -1. All the privileged and sensitive instructions are trapped in the hypervisor automatically. This technique removes the difficulty of implementing the binary translation of full virtualization. It also lets the operating system run in VMs without modification.

Example: Intel Hardware-Assisted CPU Virtualization

Although x86 processors were not designed to be virtualizable, great effort has been taken to virtualize them. Compared with RISC processors, they are so widely used that the bulk of x86-based legacy systems cannot be discarded easily. Intel’s VT-x technology is an example of hardware-assisted virtualization, as shown in Figure 5.10 (II).

Intel calls this privilege level of x86 processors the VMX Root Mode. A set of additional instructions is added to control the start and stop of a VM and to allocate a memory page that maintains the CPU state for VMs. At the time of this writing, Xen, VMware, and Microsoft Virtual PC all implement their hypervisors by using the VT-x technology.

Figure 5.10 (II) Intel hardware-assisted CPU virtualization.

Generally, hardware-assisted virtualization should have high efficiency. However, since the transition from the hypervisor to the guest OS incurs high-overhead switches between processor modes, it sometimes cannot outperform binary translation.

Hence, virtualization systems such as VMware now use a hybrid approach, in which some tasks are offloaded to the hardware while the rest are still done in software. In addition, para-virtualization and hardware-assisted virtualization can be combined to improve performance further.

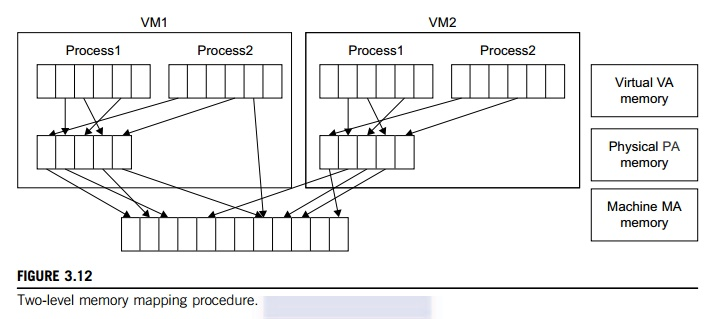

Virtual memory virtualization is similar to the virtual memory support provided by modern operating systems. In a traditional execution environment, the operating system maintains mappings of virtual memory to machine memory using page tables, which is a one-stage mapping from virtual memory to machine memory.

All modern x86 CPUs include a memory management unit (MMU) and a translation lookaside buffer (TLB) to optimize virtual memory performance. However, in a virtual execution environment, virtual memory virtualization involves sharing the physical system memory in RAM and dynamically allocating it to the physical memory of the VMs.

That means a two-stage mapping process must be maintained by the guest OS and the VMM, respectively: virtual memory to physical memory, and physical memory to machine memory. Furthermore, MMU virtualization should be supported and should be transparent to the guest OS.

The guest OS continues to control the mapping of virtual addresses to the physical memory addresses of VMs, but the guest OS cannot directly access the actual machine memory. The VMM is responsible for mapping the guest physical memory to the actual machine memory. Figure 5.11 (I) shows the two-level memory mapping procedure.

Figure 5.11 (I) Two-level memory mapping procedure.

Since each page table of the guest OS has a separate page table in the VMM corresponding to it, the VMM page table is called the shadow page table. Nested page tables add another layer of indirection to virtual memory. The MMU already handles virtual-to-physical translations as defined by the OS.

The physical memory addresses are then translated to machine addresses using another set of page tables defined by the hypervisor. Since modern operating systems maintain a set of page tables for every process, the shadow page tables will get flooded. Consequently, the performance overhead and the cost of memory will be very high.
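The two-stage mapping and the role of a shadow page table can be sketched with plain dictionaries in Python. This is a deliberately simplified model: it uses single-level tables keyed by page number and a 4 KB page size, and the names `translate`, `two_stage`, and `build_shadow` are hypothetical.

```python
from typing import Dict

PAGE_SIZE = 4096  # assumed 4 KB pages


def translate(addr: int, table: Dict[int, int]) -> int:
    """Translate an address with a single-level table of page -> frame numbers."""
    page, offset = divmod(addr, PAGE_SIZE)
    if page not in table:
        raise KeyError(f"page fault on page {page}")
    return table[page] * PAGE_SIZE + offset


def two_stage(gva: int, guest_pt: Dict[int, int], vmm_pt: Dict[int, int]) -> int:
    """Guest virtual -> guest physical (guest OS), then -> machine address (VMM)."""
    gpa = translate(gva, guest_pt)
    return translate(gpa, vmm_pt)


def build_shadow(guest_pt: Dict[int, int], vmm_pt: Dict[int, int]) -> Dict[int, int]:
    """Shadow table: collapse both stages into one virtual -> machine mapping."""
    return {vpage: vmm_pt[ppage] for vpage, ppage in guest_pt.items() if ppage in vmm_pt}


guest_pt = {0: 5, 1: 7}   # guest virtual page -> guest physical page
vmm_pt = {5: 42, 7: 9}    # guest physical page -> machine page
gva = 1 * PAGE_SIZE + 12

machine_addr = two_stage(gva, guest_pt, vmm_pt)
shadow = build_shadow(guest_pt, vmm_pt)
print(machine_addr, translate(gva, shadow))
```

Both paths give the same machine address. The shadow table saves one lookup per access, but the VMM must keep one such table in sync for every guest page table, which is the memory and maintenance cost the text describes.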

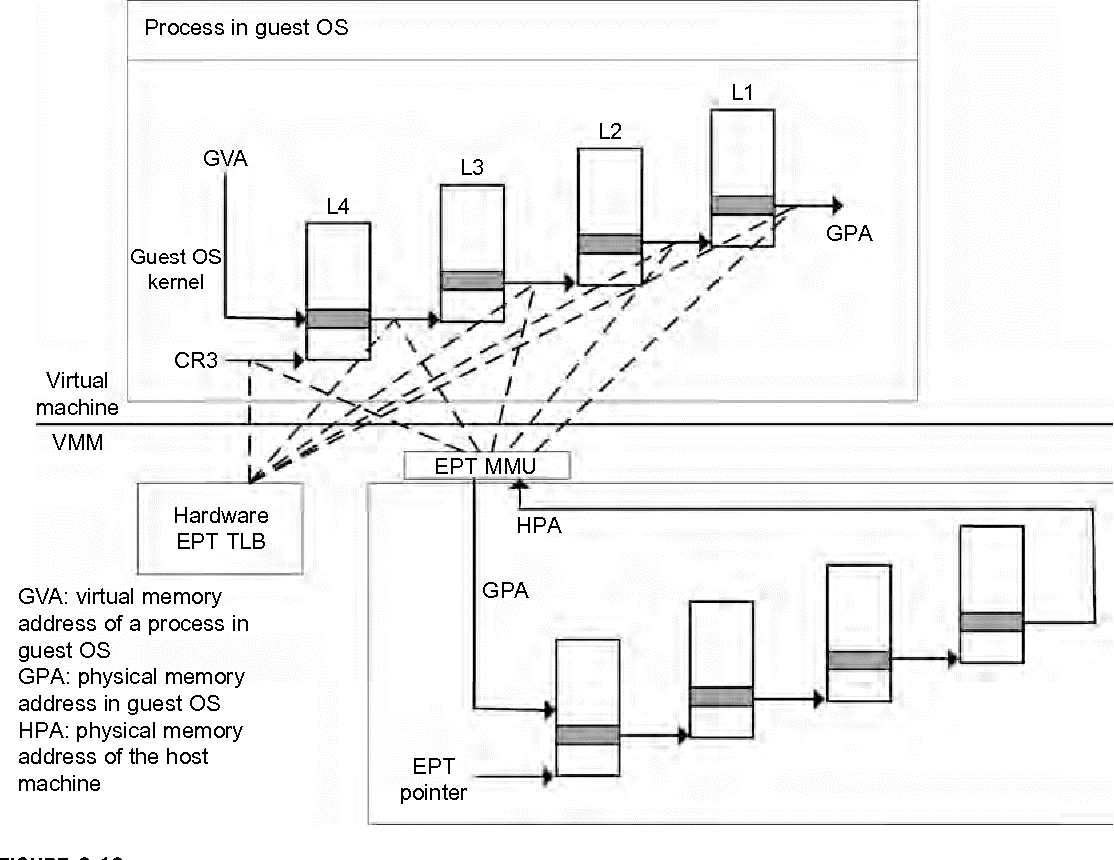

Example: Extended Page Table by Intel for Memory Virtualization

Since the efficiency of the software shadow page table technique was too low, Intel developed a hardware-based EPT technique to improve it, as shown in Figure 5.11 (II). In addition, Intel offers a Virtual Processor ID (VPID) to improve use of the TLB. As a result, the performance of memory virtualization is greatly improved. In Figure 5.11 (II), the page tables of the guest OS and the EPT are all four-level.

Figure 5.11 (II) Memory virtualization using EPT by Intel (the EPT is also known as the shadow page table).

When a virtual address needs to be translated, the CPU first looks for the L4 page table pointed to by Guest CR3. Since the address in Guest CR3 is a physical address in the guest OS, the CPU needs to convert the Guest CR3 GPA to the host physical address (HPA) using the EPT.

In this procedure, the CPU checks the EPT TLB to see if the translation is present there. If the required translation is not in the EPT TLB, the CPU looks for it in the EPT. If the CPU cannot find the translation in the EPT, an EPT violation exception is raised.

When the GPA of the L4 page table is obtained, the CPU will calculate the GPA of the L3 page table by using the GVA and the content of the L4 page table. If the entry corresponding to the GVA in the L4 page table is a page fault, the CPU will generate a page fault interrupt and will let the guest OS kernel handle the interrupt.

When the GPA of the L3 page table is obtained, the CPU looks in the EPT to get the HPA of the L3 page table. To get the HPA corresponding to a GVA, the CPU needs to look in the EPT five times, and each time, memory needs to be accessed four times. Therefore, there are 20 memory accesses in the worst case, which is still very slow. To overcome this shortcoming, Intel increased the size of the EPT TLB to decrease the number of memory accesses.
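The worst-case count quoted above follows from simple arithmetic, which the short Python sketch below reproduces. The function names are hypothetical, and the model assumes every EPT TLB lookup misses. The second function adds the reads of the guest page-table entries themselves, which the count in the text leaves out.

```python
def ept_accesses(guest_levels: int = 4, ept_levels: int = 4) -> int:
    """Worst-case EPT memory accesses for one translation, as counted in the text."""
    # One EPT walk for Guest CR3, then one after each of the guest's page-table levels.
    ept_walks = guest_levels + 1
    # Each EPT walk touches one entry per EPT level.
    return ept_walks * ept_levels


def total_accesses(guest_levels: int = 4, ept_levels: int = 4) -> int:
    """Same count, plus one read of the guest's own table entry at each guest level."""
    return ept_accesses(guest_levels, ept_levels) + guest_levels


print(ept_accesses())    # 5 walks * 4 accesses = 20
print(total_accesses())  # 20 + 4 guest page-table reads = 24
```

Either way, the cost grows with the product of the two table depths, which is why a larger EPT TLB pays off.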

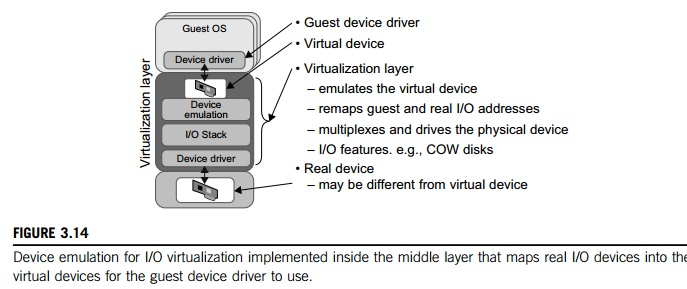

I/O Virtualization

I/O virtualization involves managing the routing of I/O requests between virtual devices and the shared physical hardware. There are three ways to implement I/O virtualization: full device emulation, para-virtualization, and direct I/O. Full device emulation is the first approach for I/O virtualization. Generally, this approach emulates well-known, real-world devices.

Figure 5.11 (III) Device emulation for I/O virtualization implemented inside the middle layer that maps real I/O devices into the virtual devices for the guest device driver to use.

All the functions of a device or bus infrastructure, such as device enumeration, identification, interrupts, and DMA, are replicated in software. This software is located in the VMM and acts as a virtual device. The I/O access requests of the guest OS are trapped in the VMM, which interacts with the I/O devices. The full device emulation approach is shown in Figure 5.11 (III).

A single hardware device can be shared by multiple VMs that run concurrently. However, software emulation runs much slower than the hardware it emulates. The para-virtualization method of I/O virtualization is typically used in Xen. It is also known as the split driver model, consisting of a frontend driver and a backend driver. The frontend driver runs in Domain U and the backend driver runs in Domain 0. They interact with each other through a block of shared memory.

The frontend driver manages the I/O requests of the guest OSes, and the backend driver is responsible for managing the real I/O devices and multiplexing the I/O data of different VMs. Although para-virtualized I/O achieves better device performance than full device emulation, it comes with a higher CPU overhead.
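The split driver model can be sketched in Python with queues standing in for the block of shared memory. This is only an illustrative model, not Xen's actual ring protocol: the classes `SharedRing`, `FrontendDriver`, and `BackendDriver`, and the request strings, are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


class SharedRing:
    """Stand-in for the shared memory block between one frontend and the backend."""

    def __init__(self) -> None:
        self.requests: Deque[str] = deque()
        self.responses: Deque[str] = deque()


class FrontendDriver:
    """Runs in a guest domain (Domain U) and only touches the shared ring."""

    def __init__(self, ring: SharedRing) -> None:
        self.ring = ring

    def submit(self, request: str) -> None:
        self.ring.requests.append(request)

    def collect(self) -> List[str]:
        done = list(self.ring.responses)
        self.ring.responses.clear()
        return done


class BackendDriver:
    """Runs in Domain 0, owns the real device, and multiplexes all guests."""

    def __init__(self) -> None:
        self.rings: Dict[int, SharedRing] = {}
        self.device_log: List[Tuple[int, str]] = []  # what reached the real device

    def attach(self, domain_id: int, ring: SharedRing) -> None:
        self.rings[domain_id] = ring

    def service(self) -> None:
        for domain_id, ring in self.rings.items():
            while ring.requests:
                request = ring.requests.popleft()
                self.device_log.append((domain_id, request))
                ring.responses.append(f"done:{request}")


backend = BackendDriver()
ring1, ring2 = SharedRing(), SharedRing()
backend.attach(1, ring1)
backend.attach(2, ring2)
guest1, guest2 = FrontendDriver(ring1), FrontendDriver(ring2)

guest1.submit("read block 7")
guest2.submit("write block 3")
backend.service()
print(backend.device_log, guest1.collect(), guest2.collect())
```

The guests never touch the device directly. Every request is copied through the ring and handled by Domain 0, which hints at where the extra CPU overhead of this model comes from.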

Direct I/O virtualization lets the VM access devices directly. It can achieve close-to-native performance without high CPU costs. However, current direct I/O virtualization implementations focus on networking for mainframes, and there are many challenges for commodity hardware devices.

For example, when a physical device is reclaimed (as required by workload migration) for later reassignment, it may have been left in an arbitrary state, for example performing DMA to arbitrary memory locations, which can cause it to function incorrectly or even crash the whole system. Since software-based I/O virtualization incurs a very high device emulation overhead, hardware-assisted I/O virtualization is critical.

Intel VT-d supports the remapping of I/O DMA transfers and device-generated interrupts. The architecture of VT-d provides the flexibility to support multiple usage models that may run unmodified, special-purpose, or “virtualization-aware” guest OSes.

Example: VMware Workstation for I/O Virtualization

VMware Workstation runs as an application. It leverages the I/O device support in guest OSes, host OSes, and the VMM to implement I/O virtualization. The application portion (VMApp) uses a driver loaded into the host operating system (VMDriver) to establish the privileged VMM, which runs directly on the hardware.

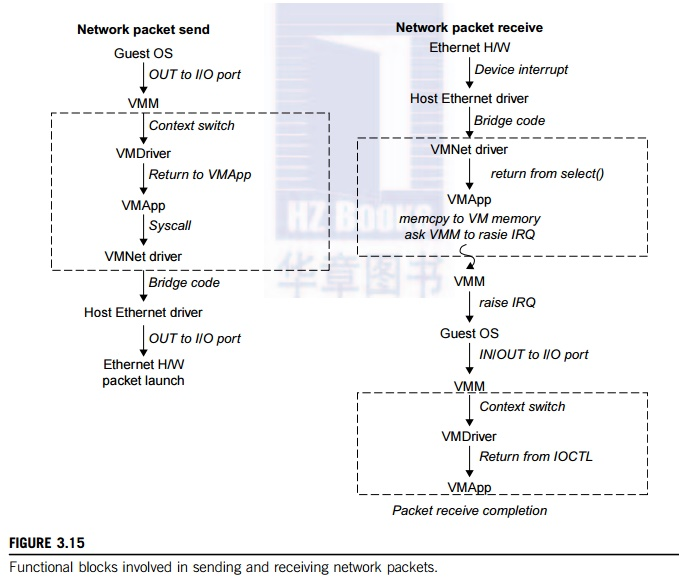

A given physical processor executes in either the host world or the VMM world, with the VMDriver facilitating the transfer of control between the two worlds. VMware Workstation employs full device emulation to implement I/O virtualization. Figure 5.11 (IV) shows the functional blocks used in sending and receiving packets via the emulated virtual NIC.

Figure 5.11 (IV) Functional blocks involved in sending and receiving network packets.

The virtual NIC models an AMD Lance Am79C970A controller. The device driver for a Lance controller in the guest OS initiates packet transmissions by reading and writing a sequence of virtual I/O ports; each read or write switches back to the VMApp to emulate the Lance port accesses.

When the last OUT instruction of the sequence is encountered, the Lance emulator calls a normal write() to the VMNet driver. The VMNet driver passes the packet onto the network through a host NIC and then the VMApp switches back to the VMM. The switch comes with a virtual interrupt to notify the guest device driver that the packet was sent. Packet receives occur in reverse.

Reference Book

1. Cloud Computing Black Book- Jayaswal, Kallakurchi, Houde, Shah, Dreamtech Press.

2. Cloud Computing: Principles and Paradigms – Buyya, Broberg, Goscinski.

Reference Links

1. Https://booksite.elsevier.com/samplechapters/9780123858801/Chapter_3.pdf

2. Https://www.mygreatlearning.com/blog/virtualization-in-cloud-computing/