Unit - 3

Context Free Grammar

Context-free grammar (CFG) is a collection of rules (or productions) for recursive rewriting used to produce string patterns.

It consists of:

● a set of terminal symbols,

● a set of non terminal symbols,

● a set of productions,

● a start symbol.

Context-free grammar G can be defined as:

G = (V, T, P, S)

Where

G - the grammar, used to generate strings,

V - finite set of non-terminal symbols, conventionally denoted by capital letters,

T - finite set of terminal symbols, conventionally denoted by lowercase letters,

P - finite set of production rules of the form A → α, where A is a non-terminal and α is a string of terminals and non-terminals; a rule replaces an occurrence of A in a string by α, whatever symbols surround it on the left or right,

S - start symbol, a non-terminal from which every derivation begins.
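As a rough illustration, the 4-tuple can be written down as a small data structure. The sketch below is one possible encoding, not a standard one: the class name `CFG`, its field names and the method `is_well_formed` are assumptions made here, and the toy grammar S → aS | ε serves only as sample input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CFG:
    variables: frozenset   # V: non-terminal symbols
    terminals: frozenset   # T: terminal symbols
    productions: tuple     # P: (head, body) pairs; body is a tuple of symbols
    start: str             # S: start symbol

    def is_well_formed(self) -> bool:
        """Check the basic constraints implied by the 4-tuple definition."""
        if self.variables & self.terminals:
            return False                      # V and T must be disjoint
        if self.start not in self.variables:
            return False                      # S must be a non-terminal
        symbols = self.variables | self.terminals
        return all(
            head in self.variables and all(s in symbols for s in body)
            for head, body in self.productions
        )


# Toy grammar S -> aS | ε; the empty tuple stands for ε.
toy = CFG(
    variables=frozenset({"S"}),
    terminals=frozenset({"a"}),
    productions=(("S", ("a", "S")), ("S", ())),
    start="S",
)
print(toy.is_well_formed())
```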

A CFG for Arithmetic Expressions -

An example grammar that generates strings representing arithmetic expressions with the four operators +, -, *, /, and numbers as operands is:

1. <expression> --> number

2. <expression> --> (<expression>)

3. <expression> --> <expression> + <expression>

4. <expression> --> <expression> - <expression>

5. <expression> --> <expression> * <expression>

6. <expression> --> <expression> / <expression>

The only non-terminal symbol in this grammar is <expression>, which is also the start symbol. The terminal symbols are {+, -, *, /, (, ), number}.

● The first rule states that an <expression> can be rewritten as a number.

● The second rule says that an <expression> enclosed in parentheses is also an <expression>.

● The remaining rules say that the sum, difference, product, or quotient of two <expression>s is also an <expression>.

Example 1:

Construct a CFG for the language of strings with any number of a's over the alphabet Σ = {a}.

Solution:

The regular expression for this language is:

r.e. = a*

The production rules corresponding to this regular expression are:

S → aS rule 1

S → ε rule 2

Starting from the start symbol, we can now derive the string “aaaaaa”:

S

aS rule 1

aaS rule 1

aaaS rule 1

aaaaS rule 1

aaaaaS rule 1

aaaaaaS rule 1

aaaaaaε rule 2

aaaaaa

The r.e. a* generates the set of strings {ε, a, aa, aaa, ...}. The empty (null) string is included because S is the start symbol and rule 2 gives S → ε.
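The derivation can also be replayed mechanically. The following is a minimal sketch, assuming the two rules S → aS (rule 1) and S → ε (rule 2) from this example; the function name `derive_a_star` is chosen here for illustration.

```python
def derive_a_star(n: int) -> list[str]:
    """Return the sentential forms in the derivation of a^n."""
    forms = ["S"]
    for _ in range(n):
        forms.append(forms[-1].replace("S", "aS"))   # rule 1: S -> aS
    forms.append(forms[-1].replace("S", ""))         # rule 2: S -> ε
    return forms


for form in derive_a_star(6):
    print(form if form else "ε")
```

Calling `derive_a_star(0)` applies only rule 2, which is how the empty string is obtained.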

Key takeaway

Context-free grammar (CFG) is a collection of rules (or productions) for recursive rewriting used to produce string patterns.

A parse tree is an entity which represents the structure of the derivation of a terminal string from some non-terminal.

● A parse tree is the graphical representation of a derivation. Each node is labelled with a symbol, which may be a terminal or a non-terminal.

● In parsing, the string is derived from the start symbol. The start symbol is the root of the parse tree.

● The parse tree reflects operator precedence. The deepest sub-tree is evaluated first, so an operator in a parent node has lower precedence than an operator in its sub-tree.

The key features to define for each tree are its root ∈ V and its yield ∈ Σ*. Here Σ is the set of terminals and V is the set of all grammar symbols, so the non-terminals are V − Σ.

● For each σ ∈ Σ, there is a tree with root σ and no children; its yield is σ.

● For each rule A → ε, there is a tree with root A and one child ε; its yield is ε.

● If t₁, t₂, ..., tₙ are parse trees with roots r₁, r₂, ..., rₙ and respective yields y₁, y₂, ..., yₙ, and A → r₁r₂...rₙ is a production, then there is a parse tree with root A whose children are t₁, t₂, ..., tₙ. Its yield is the concatenation of the yields: y₁y₂...yₙ.

Here, parse trees are constructed from bottom up, not top down.

The actual construction of “adding children” should be made more precise, but we intuitively know what’s going on.

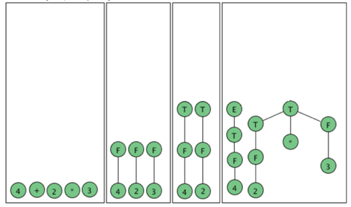

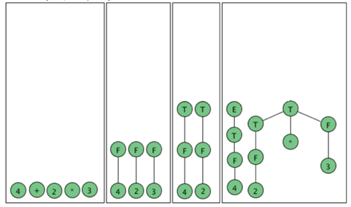

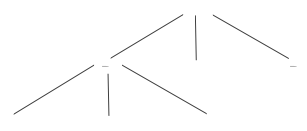

As an example, here are all the parse (sub) trees used to build the parse tree for the arithmetic expression 4 + 2 * 3 using the expression grammar

E → E + T | E - T | T

T → T * F | F

F → a | ( E )

Where a represents an operand of some type, be it a number or variable. The trees are grouped by height.

Fig 1: Example of parse tree

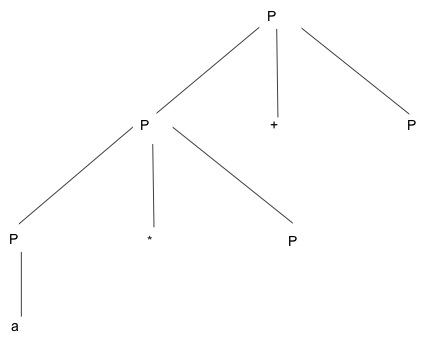

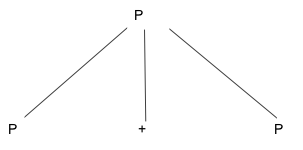
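The bottom-up definition of a parse tree and its yield can be mirrored in a few lines of Python. This is only a sketch: the `Node` class and the helper names are assumptions made for illustration, and the tree is assembled by hand for a + a * a under the grammar E → E + T | T, T → T * F | F, F → a.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    symbol: str
    children: list = field(default_factory=list)


def tree_yield(node: Node) -> str:
    """Concatenate the leaves from left to right; an ε leaf contributes nothing."""
    if node.symbol == "ε":
        return ""
    if not node.children:
        return node.symbol            # a terminal leaf yields itself
    return "".join(tree_yield(child) for child in node.children)


def f_leaf() -> Node:
    return Node("F", [Node("a")])     # F -> a


def t_from_f() -> Node:
    return Node("T", [f_leaf()])      # T -> F


# Smaller trees first, then the trees that use them as children.
product = Node("T", [t_from_f(), Node("*"), f_leaf()])                  # T -> T * F
total = Node("E", [Node("E", [t_from_f()]), Node("+"), product])       # E -> E + T

print(tree_yield(total))
```

Because the product is a sub-tree of the sum, it sits deeper in the tree, which matches the precedence of * over +.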

Example:

Production rules:

- P → P + P

- P → P * P

- P → a | b | c

Input:

a * b + c

Steps 1 to 5: [figures showing the parse tree for a * b + c being built step by step]

Key takeaway:

In parsing, the string is derived from the start symbol, which is the root of the parse tree.

A parse tree is the graphical representation of a derivation; its nodes are labelled with terminal or non-terminal symbols.

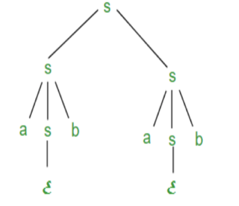

Suppose we have a context free grammar G with production rules:

S → aSb | bSa | SS | ε

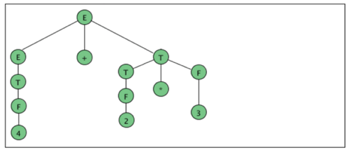

Leftmost derivation (LMD) and derivation tree:

A leftmost derivation of a string from the starting symbol S is obtained by replacing, at each step, the leftmost non-terminal symbol by the RHS of one of its production rules.

For example:

The leftmost derivation of the string abab from grammar G above is:

S ⇒ aSb ⇒ abSab ⇒ abab

At each step the non-terminal S is replaced using a production rule: first S → aSb, then S → bSa, and finally S → ε.

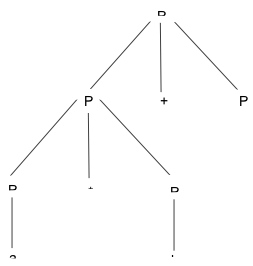

Example:

● Production rules -

P → P + P

P → P - P

P → a | b

● Input -

a - b + a

● Leftmost derivation -

P ⇒ P + P

⇒ P - P + P

⇒ a - P + P

⇒ a - b + P

⇒ a - b + a

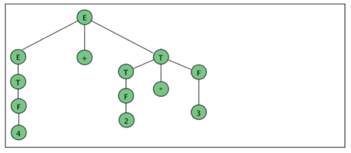

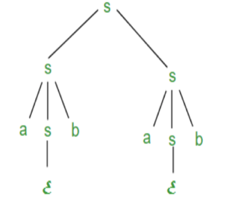

Derivation tree: It shows how a string is derived from S using the production rules, as shown in the figure.

Fig 2: Derivation tree

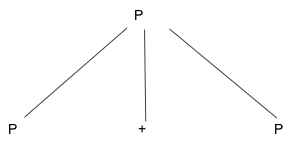

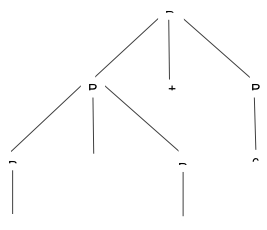

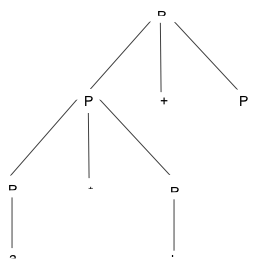

Rightmost derivation (RMD):

It is obtained by replacing, at each step, the rightmost non-terminal symbol by the RHS of one of its production rules.

For example, the rightmost derivation of the string abab from grammar G above is:

S ⇒ SS ⇒ SaSb ⇒ Sab ⇒ aSbab ⇒ abab

At each step the rightmost S is replaced using a production rule: S → SS, S → aSb, S → ε, S → aSb, and S → ε.

The derivation tree for abab using the rightmost derivation is shown in the figure.

Fig 3: Right most derivation

A derivation can be an LMD, an RMD, both, or neither.

For example:

S ⇒ aSb ⇒ abSab ⇒ abab is an LMD as well as an RMD, but

S ⇒ SS ⇒ SaSb ⇒ Sab ⇒ aSbab ⇒ abab is an RMD but not an LMD.

Example:

● Production rules -

P → P + P

P → P - P

P → a | b

● Input -

a - b + a

● Rightmost derivation -

P ⇒ P - P

⇒ P - P + P

⇒ P - P + a

⇒ P - b + a

⇒ a - b + a

● Both the leftmost and the rightmost derivation produce the same string.
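The two derivations of a - b + a can be replayed with a short helper. This is a sketch under assumptions: the function name `derive` and its parameters are invented here, symbols are kept as list items, and the helper does not check that each body really is a production of the grammar.

```python
def derive(start, bodies, nonterminals, leftmost=True):
    """Apply each body in turn to the leftmost (or rightmost) non-terminal.

    Returns every sentential form as a space-separated string. Raises
    IndexError if a body is supplied when no non-terminal is left.
    """
    form = [start]
    forms = [" ".join(form)]
    for body in bodies:
        positions = [k for k, s in enumerate(form) if s in nonterminals]
        i = positions[0] if leftmost else positions[-1]
        form[i:i + 1] = body                  # replace one symbol by the body
        forms.append(" ".join(form))
    return forms


plus, minus = ["P", "+", "P"], ["P", "-", "P"]

lmd = derive("P", [plus, minus, ["a"], ["b"], ["a"]], {"P"})
rmd = derive("P", [minus, plus, ["a"], ["b"], ["a"]], {"P"}, leftmost=False)

print(" => ".join(lmd))
print(" => ".join(rmd))
```

Both runs end in the same string, although they start with different productions.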

Parse Trees and Derivations

A derivation is a sequence of strings in V* which starts with a non-terminal in V − Σ and ends with a string in Σ*.

Let’s consider the sample grammar

E → E+E | a

We write:

E ⇒ E+E ⇒ E+E+E ⇒ a+E+E ⇒ a+a+E ⇒ a+a+a

But this is incomplete, because it doesn’t tell us where the replacement rules are applied.

We actually need "marked" strings which indicate which non-terminal is replaced in all but the first and last step:

E ⇒ Ě+E ⇒ Ě+E+E ⇒ a+Ě+E ⇒ a+a+Ě ⇒ a+a+a

In this case, the marking is only necessary in the second step; however, it is crucial, because we want to distinguish between this derivation and the following one:

E ⇒ E+Ě ⇒ Ě+E+E ⇒ a+Ě+E ⇒ a+a+Ě ⇒ a+a+a

We want to characterize two derivations as “coming from the same parse tree.”

The first step is to define the relation among derivations as being “more left-oriented at one step”. Assume we have two equal length derivations of length n > 2:

D: x₁ ⇒ x₂ ⇒ ... ⇒ xₙ

D′: x₁′ ⇒ x₂′ ⇒ ... ⇒ xₙ′

where x₁ = x₁′ is a non-terminal and

xₙ = xₙ′ ∈ Σ*

Namely, they start with the same non-terminal, end at the same terminal string, and have at least two intermediate steps.

Let’s say D < D′ if the two derivations differ in only one step in which there are 2 non-terminals, A and B, such that D replaces the left one before the right one and D′ does the opposite. Formally:

D < D′ if there exists k, 1 < k < n, such that

xᵢ = xᵢ′ for all i ≠ k (equal strings, same marked position)

xₖ₋₁ = uǍvBw, for u, v, w ∈ V*

xₖ₋₁′ = uAvB̌w, for u, v, w ∈ V*

xₖ = uyvB̌w, for production A → y

xₖ′ = uǍvzw, for production B → z

xₖ₊₁ = xₖ₊₁′ = uyvzw (marking not shown)

Two derivations are said to be similar if they belong to the reflexive, symmetric, transitive closure of <.

● A context-free grammar is called ambiguous if some string generated by the grammar has more than one LMD or more than one RMD.

● Equivalently, in an ambiguous grammar some string has more than one derivation tree.

● The grammar G described above (S → aSb | bSa | SS | ε) is ambiguous because the string abab has two derivation trees.

● Correspondingly, there is more than one RMD for the string abab:

S ⇒ SS ⇒ SaSb ⇒ Sab ⇒ aSbab ⇒ abab

S ⇒ aSb ⇒ abSab ⇒ abab
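For small grammars, ambiguity can be made concrete by counting parse trees. The sketch below uses the sample grammar E → E+E | a from the previous section rather than G, because it has no ε productions and the count stays finite; the function name `count_trees` is an assumption made here.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def count_trees(s: str) -> int:
    """Number of distinct parse trees of s under E -> E+E | a."""
    total = 1 if s == "a" else 0                 # E -> a
    for i, ch in enumerate(s):
        if ch == "+":                            # E -> E+E, split at this '+'
            total += count_trees(s[:i]) * count_trees(s[i + 1:])
    return total


for text in ["a", "a+a", "a+a+a", "a+a+a+a"]:
    print(text, count_trees(text))
```

A count greater than 1 for any string shows that the grammar is ambiguous; here a+a+a already has two trees, one for each choice of the top-level +.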

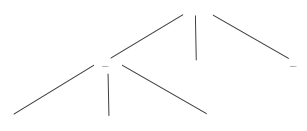

As we have seen, context-free grammars can represent many different languages. Not every grammar is optimized, however: a grammar may contain extra (non-terminal) symbols that increase its length unnecessarily. Simplifying the grammar removes them.

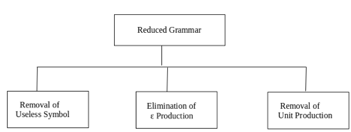

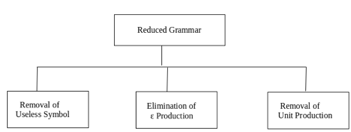

A reduced grammar has the following properties:

- Every variable (i.e. non-terminal) and every terminal of G occurs in the derivation of some word in L.

- There are no productions of the form X → Y where X and Y are non-terminals.

- If ε is not in the language L, then there is no need for a production X → ε.

Let's look at the simplification process.

Fig 4: Simplification process

● Removal of Useless Symbols - A symbol is useless if it is not involved in the derivation of any string of the language. This happens when the symbol cannot be reached from the start symbol (it appears on the right-hand side of no production that is itself reachable), or when it is a variable that cannot derive any string of terminals. Such symbols, and the productions that contain them, can be removed without changing the language.
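One common way to carry this out is to drop the variables that derive no terminal string first, and then the variables that are unreachable from the start symbol. The sketch below assumes a grammar stored as a dict from each variable to a list of bodies (tuples of symbols), with every symbol that is not a key treated as a terminal; the function name and the sample grammar are hypothetical.

```python
def remove_useless(grammar, start):
    """Drop non-generating variables first, then unreachable ones."""
    nonterminals = set(grammar)

    # 1. Generating variables: those that derive some string of terminals.
    generating = set()
    changed = True
    while changed:
        changed = False
        for head, bodies in grammar.items():
            if head in generating:
                continue
            for body in bodies:
                if all(s in generating or s not in nonterminals for s in body):
                    generating.add(head)
                    changed = True
                    break

    def only_generating(body):
        return all(s in generating or s not in nonterminals for s in body)

    pruned = {
        head: [b for b in bodies if only_generating(b)]
        for head, bodies in grammar.items()
        if head in generating
    }

    # 2. Reachable variables: those that occur in some derivation from start.
    reachable, stack = {start}, [start]
    while stack:
        for body in pruned.get(stack.pop(), []):
            for s in body:
                if s in nonterminals and s not in reachable:
                    reachable.add(s)
                    stack.append(s)

    return {head: bodies for head, bodies in pruned.items() if head in reachable}


sample = {
    "S": [("A", "B"), ("a",)],
    "A": [("a",)],
    "B": [("B", "b")],      # B never derives a terminal string
    "C": [("c",)],          # C is never reached from S
}
print(remove_useless(sample, "S"))
```

In the sample, removing B also removes S → AB, after which A is no longer reachable; doing the two passes in the opposite order would leave A behind.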

● Elimination of ε Production - Productions of the form A → ε are called ε productions. They can be eliminated completely only from grammars whose language does not contain ε.

Step 1: First find all nullable non-terminals, i.e. those which derive ε.

Step 2: For each production A → α, create all productions A → x, where x is obtained from α by removing one or more of the nullable non-terminals found in step 1.

Step 3: Combine the result of step 2 with the original productions and delete the ε productions.

Example: Remove the ε productions from the given CFG

- E → XYX

- X → 0X | ε

- Y → 1Y | ε

Solution:

We remove the rules X → ε and Y → ε. To preserve the meaning of the CFG, wherever X or Y appears on a right-hand side we also add the variants in which it is replaced by ε.

Let's see.

The original rule is

E → XYX

If the first X on the right-hand side is ε, then

E → YX

Similarly, if the last X on the right-hand side is ε, then

E → XY

If Y = ε, then

E → XX

If Y and one X are ε, then

E → X

If both X are replaced by ε, then

E → Y

Now,

E → XYX | XY | YX | XX | X | Y

Now let us consider

X → 0X

If we put ε for X on the right-hand side, then

X → 0

X → 0X | 0

Similarly, Y → 1Y | 1

Collectively, with the ε productions removed, we can rewrite the CFG as

E → XYX | XY | YX | XX | X | Y

X → 0X | 0

Y → 1Y | 1

Because X and Y are both nullable, E is nullable too, so the original language contains ε; the rewritten grammar generates the same language except for ε.
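The three steps can be sketched in Python as follows. The grammar encoding (a dict from each variable to a list of bodies, with the empty tuple standing for ε) and the function names are assumptions made for this illustration. In line with step 3, the sketch keeps each original body, such as XYX, alongside the shortened ones.

```python
from itertools import product


def nullable_symbols(grammar):
    """Step 1: variables that can derive ε."""
    nullable = set()
    changed = True
    while changed:
        changed = False
        for head, bodies in grammar.items():
            if head not in nullable and any(
                all(s in nullable for s in body) for body in bodies
            ):
                nullable.add(head)
                changed = True
    return nullable


def remove_epsilon(grammar):
    """Steps 2 and 3: add the shortened bodies, then drop the ε productions."""
    nullable = nullable_symbols(grammar)
    result = {}
    for head, bodies in grammar.items():
        new_bodies = set()
        for body in bodies:
            # Every nullable symbol may either be kept or be dropped.
            options = [((s,), ()) if s in nullable else ((s,),) for s in body]
            for choice in product(*options):
                new_body = tuple(sym for part in choice for sym in part)
                if new_body:                       # never emit ε itself
                    new_bodies.add(new_body)
        result[head] = new_bodies
    return result


grammar = {
    "E": [("X", "Y", "X")],
    "X": [("0", "X"), ()],
    "Y": [("1", "Y"), ()],
}
for head, bodies in remove_epsilon(grammar).items():
    print(head, "->", " | ".join("".join(b) for b in sorted(bodies)))
```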

● Removing unit production - Unit productions are productions of the form X → Y, in which one non-terminal generates a single other non-terminal.

To remove unit productions, follow the given steps:

Step 1: To remove X → Y, add the production X → a to the grammar for every production Y → a in the grammar (where a is any right-hand side).

Step 2: Delete X → Y from the grammar.

Step 3: Repeat steps 1 and 2 until all unit productions are removed.

Example:

- E → 0X | 1Y | Z

- X → 0E | 00

- Y → 1 | X

- Z → 01

Sol:

E → Z is a unit production. To remove E → Z, we have to consider what Z produces, Z → 01, and add that rule to E:

E → 0X | 1Y | 01

Similarly, Y → X is also a unit production. Since X → 0E | 00, we can modify it as

Y → 1 | 0E | 00

Finally, without unit productions, we can write the CFG as

E → 0X | 1Y | 01

X → 0E | 00

Y → 1 | 0E | 00

Z → 01

Z is now unreachable from E, so it is a useless symbol and could be removed as well.
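A compact way to automate this is to compute, for every variable, the set of variables it reaches through unit productions alone, and then copy over their non-unit bodies. This unit-closure formulation is a swapped-in variant of the repeat-until-done steps and should give the same result; the grammar encoding and the function name are assumptions made here.

```python
def remove_unit_productions(grammar):
    """Replace every unit production X -> Y by the non-unit bodies Y leads to."""
    nonterminals = set(grammar)

    def is_unit(body):
        return len(body) == 1 and body[0] in nonterminals

    result = {}
    for head in grammar:
        # Variables reachable from head using unit productions only.
        reachable, stack = {head}, [head]
        while stack:
            for body in grammar[stack.pop()]:
                if is_unit(body) and body[0] not in reachable:
                    reachable.add(body[0])
                    stack.append(body[0])
        result[head] = {
            body
            for source in reachable
            for body in grammar[source]
            if not is_unit(body)
        }
    return result


grammar = {
    "E": [("0", "X"), ("1", "Y"), ("Z",)],
    "X": [("0", "E"), ("0", "0")],
    "Y": [("1",), ("X",)],
    "Z": [("0", "1")],
}
for head, bodies in remove_unit_productions(grammar).items():
    print(head, "->", " | ".join("".join(b) for b in sorted(bodies)))
```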

Key takeaway

Grammar simplification means reducing a grammar by eliminating the symbols and productions it does not need.

The simplification process contains three major parts:

removal of useless symbols, elimination of ε productions, and removal of unit productions.

Chomsky normal forms

A context free grammar (CFG) is in Chomsky Normal Form (CNF) if all production rules satisfy one of the following conditions:

A non-terminal generating a terminal (e.g., X → x)

A non-terminal generating two non-terminals (e.g., X → YZ)

The start symbol generating ε (e.g., S → ε)

Consider the following grammars:

G1 = {S → a, S → AZ, A → a, Z → z}

G2 = {S → a, S → aZ, Z → a}

The grammar G1 is in CNF, as every production rule satisfies one of the conditions above. However, the grammar G2 is not in CNF, as the production rule S → aZ has a terminal followed by a non-terminal on its right-hand side, which matches none of the conditions.
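The three conditions translate directly into a small checker. The sketch below assumes single-character symbols, productions given as (head, body) string pairs, and that any symbol not listed as a non-terminal is a terminal; the function name `is_cnf` is chosen here for illustration.

```python
def is_cnf(productions, nonterminals, start="S"):
    """Return True if every production has one of the three CNF shapes."""
    for head, body in productions:
        if len(body) == 1 and body[0] not in nonterminals:
            continue                                  # X -> x
        if len(body) == 2 and all(s in nonterminals for s in body):
            continue                                  # X -> YZ
        if body == "" and head == start:
            continue                                  # S -> ε
        return False
    return True


G1 = [("S", "a"), ("S", "AZ"), ("A", "a"), ("Z", "z")]
G2 = [("S", "a"), ("S", "aZ"), ("Z", "a")]

print(is_cnf(G1, {"S", "A", "Z"}))
print(is_cnf(G2, {"S", "Z"}))
```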

Greibach normal forms

A CFG is in Greibach Normal Form (GNF) if the productions are in the following forms −

A → b

A → bD₁…Dₙ

S → ε

where A, D₁, ..., Dₙ are non-terminals and b is a terminal.
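These forms can be checked mechanically as well. The following is a sketch under the same simplifying assumptions as before: single-character symbols, productions as (head, body) string pairs, and every symbol not listed as a non-terminal treated as a terminal. The function name `is_gnf` and the sample productions are hypothetical.

```python
def is_gnf(productions, nonterminals, start="S"):
    """Return True if every body is one terminal followed by non-terminals."""
    for head, body in productions:
        if body == "" and head == start:
            continue                                  # S -> ε
        if (
            body
            and body[0] not in nonterminals           # leading terminal b
            and all(s in nonterminals for s in body[1:])   # then D1 ... Dn
        ):
            continue
        return False
    return True


variables = {"S", "X", "Y"}
print(is_gnf([("S", "mXY"), ("S", "p"), ("X", "mX"), ("X", "m")], variables))
print(is_gnf([("S", "XY")], variables))     # starts with a non-terminal
print(is_gnf([("S", "mn")], variables))     # terminal n after the first symbol
```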

Algorithm to Convert a CFG into Greibach Normal Form

Step 1 − If the start symbol S occurs on some right side, create a new start symbol S’ and a new production S’ → S.

Step 2 − Remove null productions.

Step 3 − Remove unit productions.

Step 4 − Remove all direct and indirect left-recursion.

Step 5 − Do proper substitutions of productions to convert it into the proper form of GNF.

Problem

Convert the following CFG into GNF

S → XY | Xn | p

X → mX | m

Y → Xn | o

Solution

Here, S does not appear on the right side of any production and there are no unit or null productions in the production rule set. So, we can skip Step 1 to Step 3.

Step 4

The grammar has no left recursion, so nothing has to be removed.

Step 5

After replacing

X in S → XY | Xn | p

with

mX | m

we obtain

S → mXY | mY | mXn | mn | p.

And after replacing

X in Y → Xn | o

with the right side of

X → mX | m

we obtain

Y → mXn | mn | o.

The terminal n still appears after the leading terminal in some right-hand sides, so a new production N → n is added to the production set and n is replaced by N in those positions. We arrive at the final GNF as the following −

S → mXY | mY | mXN | mN | p

X → mX | m

Y → mXN | mN | o

N → n

Key takeaway:

- Conversion to CNF is a pre-processing step used in various algorithms, for example the CYK parsing algorithm.

- In CNF, the derivation of a string x of length m ≥ 1 requires exactly 2m − 1 production steps.

Lemma:

The language L = {aⁿbⁿcⁿ | n ≥ 1} is not context free.

Proof (by contradiction)

Assume that this language is context-free; then it has a context-free grammar.

Let K be the constant of the Pumping Lemma.

Consider the string aᴷbᴷcᴷ, which is in L and has length greater than K.

By the Pumping Lemma this string can be written as uvxyz, with v and y not both empty, such that all strings uvⁱxyⁱz are also in L. This is not possible: neither v nor y can contain more than one kind of letter from {a, b, c}, or else the pumped strings have letters in the wrong order.

And if v and y each consist only of a’s, only of b’s or only of c’s, then uv²xy²z cannot maintain the balance amongst the three letters, since at most two of the three counts increase.
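For a small constant the argument can be checked by brute force. The sketch below tries every split s = uvxyz with |vxy| ≤ K and |vy| ≥ 1 (the length bound is the usual side condition of the Pumping Lemma, assumed here since the text does not spell it out) and tests only i = 0 and i = 2. The function names and the choice K = 4 are illustrative; a finite check like this supports the proof but does not replace it.

```python
def in_abc(s: str) -> bool:
    """Membership in {a^n b^n c^n | n >= 1}."""
    n = len(s) // 3
    return n >= 1 and s == "a" * n + "b" * n + "c" * n


def can_be_pumped(s: str, k: int, member) -> bool:
    """True if some split s = uvxyz survives pumping with i = 0 and i = 2."""
    n = len(s)
    for start in range(n):
        for end in range(start + 1, min(start + k, n) + 1):   # vxy = s[start:end]
            for p in range(start, end + 1):                   # v = s[start:p]
                for q in range(p, end + 1):                   # x = s[p:q], y = s[q:end]
                    u, v, x = s[:start], s[start:p], s[p:q]
                    y, z = s[q:end], s[end:]
                    if not v and not y:
                        continue                              # |vy| >= 1 required
                    if all(member(u + v * i + x + y * i + z) for i in (0, 2)):
                        return True
    return False


K = 4
print(can_be_pumped("a" * K + "b" * K + "c" * K, K, in_abc))
```

For comparison, the same search does find a valid split for a string of the context-free language {aⁿbⁿ}, for example v = a and y = b in aabb.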

Lemma:

The language L = {aⁱbʲcᵏ | i < j and i < k} is not context free.

Proof (by contradiction)

Assume that this language is context-free; then it has a context-free grammar.

Let K be the constant of the Pumping Lemma.

Consider the string aᴷbᴷ⁺¹cᴷ⁺¹, which is in L and has length greater than K.

By the Pumping Lemma this string must be representable as uvxyz, such that all strings uvⁱxyⁱz are also in L.

- As mentioned previously, neither v nor y may contain a mixture of symbols.

- Suppose v consists of a’s.

Then y cannot contain both b’s and c’s, so there is no way for it to generate enough letters to keep both of them more numerous than the a’s (it can do so for one or the other of them, not both).

Similarly, y cannot consist of just a’s.

- So suppose then that v or y contains only b’s or only c’s.

Consider the string uv⁰xy⁰z = uxz, which must be in L. Since we have dropped both v and y, we must have at least one b or one c fewer than we had in uvxyz, which was aᴷbᴷ⁺¹cᴷ⁺¹. Consequently, this string no longer has enough b’s or enough c’s to be a member of L.

