Unit 1

Matrices

1. Definition:

An arrangement of mn numbers in m rows and n columns is called a matrix of order m × n.

A matrix is generally denoted by a capital letter, such as A, B, C, etc.

2. Types of matrices (review):

- Row matrix

- Column matrix

- Square matrix

- Diagonal matrix

- Trace of a matrix

- The determinant of a square matrix

- Singular matrix

- Non-singular matrix

- Zero/null matrix

- Unit/identity matrix

- Scalar matrix

- Transpose of a matrix

- Triangular matrices (upper triangular and lower triangular matrices)

- Conjugate of a matrix

- Symmetric matrix

- Skew-symmetric matrix

3. Operations on matrices:

- Equality of two matrices

- Multiplication of A by a scalar k.

- Addition and subtraction of two matrices

- Product of two matrices

- The inverse of a matrix

4. Elementary transformations

a) Elementary row transformation

These are three elementary transformations

- Interchanging any two rows (Rij)

- Multiplying all elements in ist row by a non – zero constant k is denoted by KRi

- Adding to the elements in an ith row by the kth multiple of the jth row is denoted by

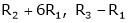

.

.

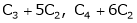

b) Elementary column transformations:

There are three elementary column transformations:

- Interchanging the ith and jth columns, denoted by Cij.

- Multiplying the ith column by a non-zero constant k, denoted by kCi.

- Adding k times the elements of the jth column to the corresponding elements of the ith column, denoted by Ci + kCj.
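As a small illustration, the three row transformations and their column counterparts can be sketched in Python. This assumes a matrix is stored as a list of rows with indices starting at 0 (so R12 corresponds to `interchange_rows(a, 0, 1)`); the function names are hypothetical. Each function returns a new matrix and leaves its input unchanged, and the column transformations are implemented as row transformations applied to the transpose.

```python
def interchange_rows(a, i, j):
    """R_ij: interchange rows i and j."""
    b = [row[:] for row in a]
    b[i], b[j] = b[j], b[i]
    return b


def scale_row(a, i, k):
    """kR_i: multiply every element of row i by a non-zero constant k."""
    if k == 0:
        raise ValueError("k must be non-zero")
    b = [row[:] for row in a]
    b[i] = [k * x for x in b[i]]
    return b


def add_multiple_of_row(a, i, j, k):
    """R_i + kR_j: add k times row j to row i."""
    b = [row[:] for row in a]
    b[i] = [x + k * y for x, y in zip(b[i], b[j])]
    return b


def transpose(a):
    return [list(col) for col in zip(*a)]


# Column transformations = row transformations applied to the transpose.
def interchange_cols(a, i, j):
    """C_ij"""
    return transpose(interchange_rows(transpose(a), i, j))


def scale_col(a, i, k):
    """kC_i"""
    return transpose(scale_row(transpose(a), i, k))


def add_multiple_of_col(a, i, j, k):
    """C_i + kC_j"""
    return transpose(add_multiple_of_row(transpose(a), i, j, k))


A = [[1, 2], [3, 4]]                       # hypothetical example matrix
print(add_multiple_of_row(A, 1, 0, -3))    # [[1, 2], [0, -2]]  (R2 - 3R1)
```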

The rank of a matrix:

Let A be a given rectangular or square matrix. From this matrix select any r rows, and from these r rows select any r columns, thus obtaining a square matrix of order r × r. The determinant of this r × r matrix is called a minor of order r.

A matrix A is said to be of rank r if it has at least one non-zero minor of order r and every minor of order r + 1 (if any) is zero.

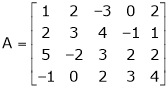

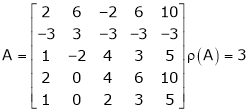

e.g.

If

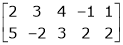

For example, select the 2nd and 3rd row. i.e.

Now select any two columns. Suppose 1st and 2nd.

i.e.
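The definition of a minor translates directly into a brute-force rank check. The sketch below uses a hypothetical 3 × 3 matrix (not the one in the example) and returns the largest order r for which some minor of order r is non-zero. It expands determinants by cofactors, so it is only meant for small matrices.

```python
from itertools import combinations


def det(m):
    """Determinant by cofactor expansion along the first row (small matrices only)."""
    if len(m) == 1:
        return m[0][0]
    total = 0
    for j in range(len(m)):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total


def minors(a, r):
    """Yield every minor of order r: pick r rows, then r columns, take the determinant."""
    for rows in combinations(range(len(a)), r):
        for cols in combinations(range(len(a[0])), r):
            yield det([[a[i][j] for j in cols] for i in rows])


def rank_by_minors(a):
    """Largest r with at least one non-zero minor of order r (0 for a zero matrix)."""
    for r in range(min(len(a), len(a[0])), 0, -1):
        if any(value != 0 for value in minors(a, r)):
            return r
    return 0


A = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]   # hypothetical example matrix
print(det(A))             # 0, so the only minor of order 3 vanishes
print(rank_by_minors(A))  # 2, e.g. rows 2, 3 and columns 1, 2 give -4
```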

Invariance of rank under elementary transformations:

- The rank of a matrix remains unchanged by elementary transformations, i.e. if a matrix B is obtained from a matrix A by elementary transformations, then

The rank of A = Rank of B
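In practice the rank is found with elementary row transformations rather than by listing minors. A minimal sketch, assuming exact arithmetic with Python's `fractions.Fraction`, reduces a matrix to echelon form and counts the pivots. It then applies two elementary transformations (R2 - 2R1, then R13) to a hypothetical matrix A and confirms that the rank does not change.

```python
from fractions import Fraction


def rank(a):
    """Rank = number of pivots after reducing to echelon form by row transformations."""
    m = [[Fraction(x) for x in row] for row in a]
    rows, cols = len(m), len(m[0])
    r = 0
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if m[i][c] != 0), None)
        if pivot is None:
            continue                       # no pivot in this column
        m[r], m[pivot] = m[pivot], m[r]    # interchange rows
        for i in range(r + 1, rows):       # R_i - f R_r clears below the pivot
            f = m[i][c] / m[r][c]
            m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        r += 1
        if r == rows:
            break
    return r


A = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]             # hypothetical matrix
B = [row[:] for row in A]
B[1] = [x - 2 * y for x, y in zip(B[1], B[0])]    # R2 - 2R1
B[0], B[2] = B[2], B[0]                           # R13
print(rank(A), rank(B))                           # 2 2
```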

2. Equivalent matrices:

A matrix B obtained from a matrix A by a finite sequence of elementary transformations is said to be equivalent to A, and we write A ~ B.

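Normal form or canonical form:

Every m × n matrix of rank r can be reduced to the form

$$\begin{bmatrix} I_r & O \\ O & O \end{bmatrix}$$

by a finite sequence of elementary transformations, where $I_r$ is the identity matrix of order r and O denotes a zero matrix. This form is called the normal form or the first canonical form of the matrix A.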
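The reduction to normal form can be sketched as follows. The pivoting strategy is an assumption made for illustration, not necessarily the sequence of operations used in the worked examples: at each step a non-zero entry of the unreduced block is moved to the pivot position by a row and a column interchange, scaled to 1, and then used to clear its column (row transformations) and its row (column transformations). The number of 1s on the diagonal of the result is the rank r.

```python
from fractions import Fraction


def normal_form(a):
    """Reduce a to [[I_r, O], [O, O]] by elementary transformations; return (N, r)."""
    m = [[Fraction(x) for x in row] for row in a]
    rows, cols = len(m), len(m[0])
    r = 0
    while r < min(rows, cols):
        # find any non-zero entry in the block not yet reduced
        pos = next(((i, j) for i in range(r, rows) for j in range(r, cols)
                    if m[i][j] != 0), None)
        if pos is None:
            break                                   # remaining block is zero
        i, j = pos
        m[r], m[i] = m[i], m[r]                     # row interchange
        for row in m:                               # column interchange
            row[r], row[j] = row[j], row[r]
        pivot = m[r][r]
        m[r] = [x / pivot for x in m[r]]            # (1/pivot) R_r makes the pivot 1
        for i2 in range(rows):                      # R_i - k R_r clears column r
            if i2 != r and m[i2][r] != 0:
                k = m[i2][r]
                m[i2] = [x - k * y for x, y in zip(m[i2], m[r])]
        for j2 in range(cols):                      # C_j - k C_r clears row r
            if j2 != r and m[r][j2] != 0:
                k = m[r][j2]
                for row in m:
                    row[j2] -= k * row[r]
        r += 1
    return m, r


N, r = normal_form([[1, 2, 3], [2, 4, 6], [1, 0, 1]])   # hypothetical matrix
print(r)                                      # 2
print([[int(x) for x in row] for row in N])   # [[1, 0, 0], [0, 1, 0], [0, 0, 0]]
```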

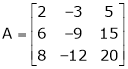

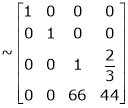

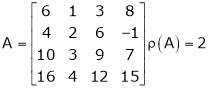

Ex. 1

Reduce the following matrix to normal form and hence find its rank.

Solution:

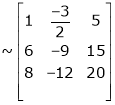

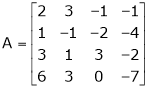

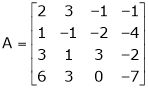

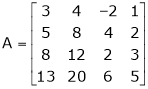

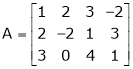

We have,

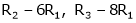

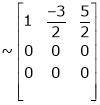

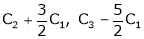

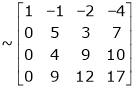

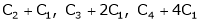

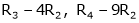

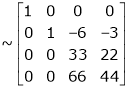

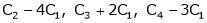

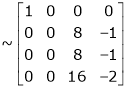

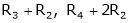

Apply

The rank of A = 1


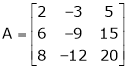

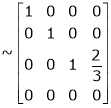

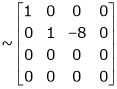

Ex. 2

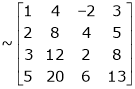

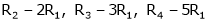

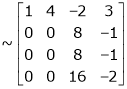

Find the rank of the matrix

Solution:

We have,

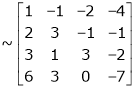

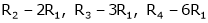

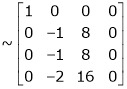

Apply R12

The rank of A = 3


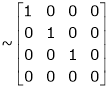

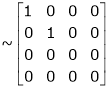

Ex. 3

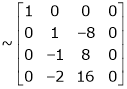

Find the rank of the following matrix by reducing it to normal form.

Solution:

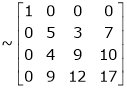

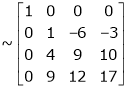

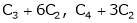

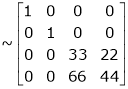

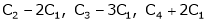

Apply C14

H.W.

Reduce the following matrices to normal form and hence find their ranks.

a)

b)

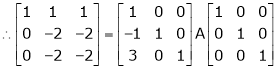

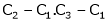

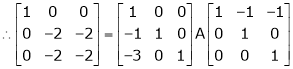

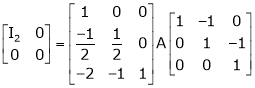

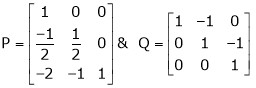

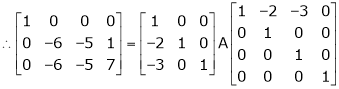

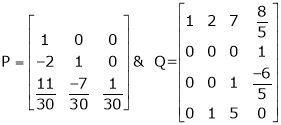

5. Reduction of a matrix A to the normal form PAQ:

If A is an m × n matrix of rank r, then there exist non-singular matrices P and Q such that PAQ is in normal form,

i.e.

$$PAQ = \begin{bmatrix} I_r & O \\ O & O \end{bmatrix}.$$

To obtain the matrices P and Q, we use the following procedure.

Working rule:

- If A is an m × n matrix, write $A = I_m A I_n$.

- Apply row transformations to A on the l.h.s. and the same row transformations to the prefactor $I_m$.

- Apply column transformations to A on the l.h.s. and the same column transformations to the postfactor $I_n$.

Continue until A on the l.h.s. reduces to the normal form N. The transformed prefactor and postfactor are then the required matrices P and Q, so that N = PAQ.
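The working rule can be sketched in Python by applying every row transformation to both A and the prefactor P, and every column transformation to both A and the postfactor Q, starting from P = I_m and Q = I_n. The pivoting strategy and the sample matrix are assumptions made for illustration. Because different sequences of transformations give different P and Q, these matrices are not unique, but PAQ always equals the normal form.

```python
from fractions import Fraction


def identity(n):
    return [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]


def matmul(x, y):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*y)] for row in x]


def paq_normal_form(a):
    """Start from A = I_m A I_n; return (N, P, Q, r) with N = PAQ in normal form."""
    m = [[Fraction(v) for v in row] for row in a]
    rows, cols = len(m), len(m[0])
    P, Q = identity(rows), identity(cols)

    def row_swap(i, j):          # R_ij on A and on the prefactor P
        for mat in (m, P):
            mat[i], mat[j] = mat[j], mat[i]

    def row_scale(i, k):         # kR_i on A and P
        for mat in (m, P):
            mat[i] = [k * v for v in mat[i]]

    def row_add(i, j, k):        # R_i + kR_j on A and P
        for mat in (m, P):
            mat[i] = [u + k * v for u, v in zip(mat[i], mat[j])]

    def col_swap(i, j):          # C_ij on A and on the postfactor Q
        for mat in (m, Q):
            for row in mat:
                row[i], row[j] = row[j], row[i]

    def col_add(i, j, k):        # C_i + kC_j on A and Q
        for mat in (m, Q):
            for row in mat:
                row[i] += k * row[j]

    r = 0
    while r < min(rows, cols):
        pos = next(((i, j) for i in range(r, rows) for j in range(r, cols)
                    if m[i][j] != 0), None)
        if pos is None:
            break                # the remaining block is zero
        row_swap(r, pos[0])
        col_swap(r, pos[1])
        row_scale(r, 1 / m[r][r])
        for i in range(rows):
            if i != r and m[i][r] != 0:
                row_add(i, r, -m[i][r])
        for j in range(cols):
            if j != r and m[r][j] != 0:
                col_add(j, r, -m[r][j])
        r += 1
    return m, P, Q, r


A = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]          # hypothetical 3 x 3 matrix
N, P, Q, r = paq_normal_form(A)
print(r)                                        # 2
print(matmul(matmul(P, A), Q) == N)             # True
```

The same call works for a rectangular matrix, where P is m × m and Q is n × n.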

Example 1

For the given matrix A, find two matrices P and Q such that PAQ is in normal form.

Solution:

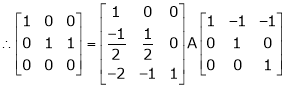

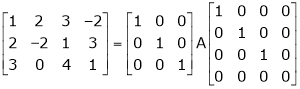

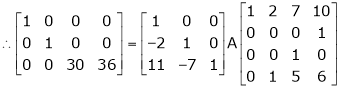

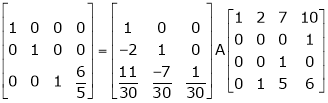

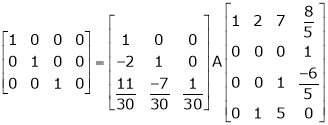

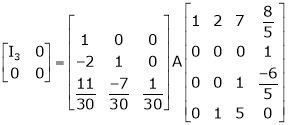

Here A is a square matrix of order 3 × 3. Hence we write

$$A = I_3 A I_3,$$

i.e.

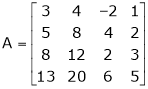

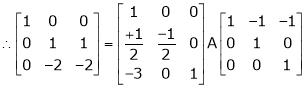

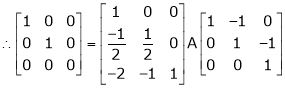

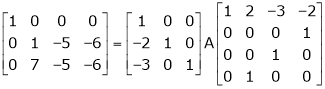

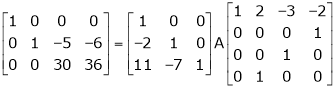

Example 2

Find non-singular matrices P and Q such that PAQ is in normal form, where

Solution:

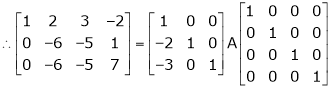

Here A is a matrix of order 3 × 4. Hence we write A as

$$A = I_3 A I_4,$$

i.e.

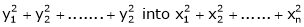

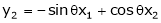

A linear transformation Y = AX is said to be orthogonal if it transforms $y_1^2 + y_2^2 + \cdots + y_n^2$ into $x_1^2 + x_2^2 + \cdots + x_n^2$.

The matrix of an orthogonal transformation is called an orthogonal matrix.

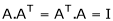

Definition:

A square matrix A is said to be orthogonal if $AA^T = A^TA = I$.

Note:

- $|A| = \pm 1$ for an orthogonal transformation.

- If A is orthogonal, then $A^{-1}$ and $A^T$ are also orthogonal.

- If A is orthogonal, then $A^{-1} = A^T$.

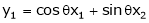
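The definition and the notes above can be checked numerically. The sketch below uses the hypothetical matrix A = (1/3)[[1, 2, 2], [2, 1, -2], [2, -2, 1]] with exact fractions, tests AA^T = I and A^TA = I, and evaluates |A|.

```python
from fractions import Fraction


def transpose(a):
    return [list(col) for col in zip(*a)]


def matmul(x, y):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*y)] for row in x]


def is_orthogonal(a):
    """True if A A^T = A^T A = I."""
    n = len(a)
    identity = [[int(i == j) for j in range(n)] for i in range(n)]
    at = transpose(a)
    return matmul(a, at) == identity and matmul(at, a) == identity


def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


# hypothetical orthogonal matrix, entries divided by 3
A = [[Fraction(v, 3) for v in row] for row in [[1, 2, 2], [2, 1, -2], [2, -2, 1]]]
print(is_orthogonal(A))             # True, so A^{-1} = A^T
print(det3(A))                      # -1
print(is_orthogonal(transpose(A)))  # True: A^T is orthogonal as well
```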

Ex.

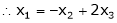

1. Show that the given transformation is orthogonal.

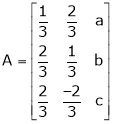

2. If the given matrix is orthogonal, find a, b, c.

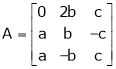

Ex. Determine the values of a, b, c when the given matrix is orthogonal.

Eigenvalues and Eigenvectors

Introduction:

In this chapter we are going to study a very important theorem. Before that, we first study eigenvalues and eigenvectors.

1. Vector

An ordered n-tuple of numbers is called an n-vector. Thus the n numbers x1, x2, …, xn taken in order denote the vector x, i.e. x = (x1, x2, …, xn).

The numbers x1, x2, …, xn are called the components or coordinates of the vector x. A vector may be written as a row vector or a column vector.

If A is an m × n matrix, then each row of A is an n-vector and each column is an m-vector.

2. Linear Dependence

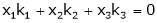

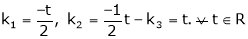

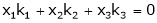

A set of r n-vectors x1, x2, …, xr is said to be linearly dependent if there exist scalars k1, k2, …, kr, not all zero, such that

$$x_1 k_1 + x_2 k_2 + \cdots + x_r k_r = 0 \qquad (1)$$

3. Linear Independence

A set of r vectors x1, x2, …, xr is said to be linearly independent if

$$x_1 k_1 + x_2 k_2 + \cdots + x_r k_r = 0$$

holds only when k1 = k2 = … = kr = 0.

Note:-

- Equation (1) is known as a vector equation.

- If the vector equation has a non-zero solution, i.e. k1, k2, …, kr not all zero, then the vectors x1, x2, …, xr are linearly dependent.

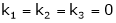

- If the vector equation has only the trivial solution, i.e. k1 = k2 = … = kr = 0, then the vectors x1, x2, …, xr are linearly independent.

4. Linear combination

If a vector x can be written in the form

$$x = x_1 k_1 + x_2 k_2 + \cdots + x_r k_r,$$

where k1, k2, …, kr are scalars, then x is called a linear combination of x1, x2, …, xr.

Results:

- A set of two or more vectors is linearly dependent if and only if at least one of the vectors can be written as a linear combination of the others.

- If a set of two or more vectors is linearly independent, then no vector in it can be expressed as a linear combination of the other vectors.
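A convenient consequence of these results is a rank test: r vectors are linearly dependent exactly when the matrix having them as rows has rank less than r. The following is a minimal sketch with hypothetical vectors, using exact fraction arithmetic from Python's standard library.

```python
from fractions import Fraction


def rank(a):
    """Rank by elementary row transformations (echelon form), exact arithmetic."""
    m = [[Fraction(x) for x in row] for row in a]
    rows, cols = len(m), len(m[0])
    r = 0
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if m[i][c] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, rows):
            f = m[i][c] / m[r][c]
            m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        r += 1
        if r == rows:
            break
    return r


def linearly_dependent(vectors):
    """r vectors are dependent exactly when the matrix with them as rows has rank < r."""
    return rank(vectors) < len(vectors)


print(linearly_dependent([(1, 2, 3), (4, 5, 6), (7, 8, 9)]))  # True
print(linearly_dependent([(1, 0, 0), (0, 1, 0), (1, 1, 1)]))  # False
```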

Example 1

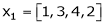

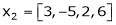

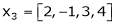

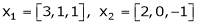

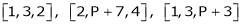

Are the vectors x1, x2, x3 given above linearly dependent? If so, express x1 as a linear combination of the others.

Solution:

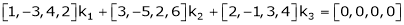

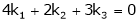

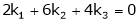

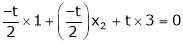

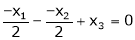

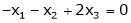

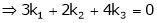

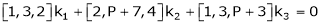

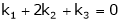

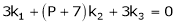

Consider the vector equation

$$x_1 k_1 + x_2 k_2 + x_3 k_3 = 0,$$

i.e.

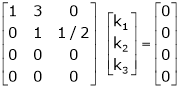

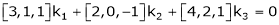

Which can be written in matrix form as,

Here the rank of the coefficient matrix is less than the number of unknowns, 3. Hence the system has infinitely many solutions. Now rewrite the equations as

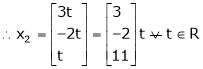

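Put the independent unknown equal to an arbitrary non-zero constant t and solve for the other two unknowns in terms of t. This gives a non-trivial relation among x1, x2, x3, from which x1 can be expressed as a linear combination of x2 and x3.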

Since k1, k2, k3 are not all zero, the vectors x1, x2, x3 are linearly dependent.
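The same steps (solve the homogeneous system, put the independent unknown equal to a constant, read off k1, k2, k3) can be sketched in Python. The vectors below are hypothetical, not the ones in the example, and the free unknown is set to 1. The helper names `rref` and `null_space` are illustrative, not from a library.

```python
from fractions import Fraction


def rref(a):
    """Reduced row echelon form with exact arithmetic; returns (R, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in a]
    rows, cols = len(m), len(m[0])
    pivots, r = [], 0
    for c in range(cols):
        p = next((i for i in range(r, rows) if m[i][c] != 0), None)
        if p is None:
            continue                    # column c gives an independent unknown
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(rows):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == rows:
            break
    return m, pivots


def null_space(a):
    """One solution of a k = 0 per independent unknown, with that unknown put = 1."""
    R, pivots = rref(a)
    n = len(a[0])
    basis = []
    for f in (j for j in range(n) if j not in pivots):
        k = [Fraction(0)] * n
        k[f] = Fraction(1)
        for row, pc in zip(R, pivots):
            k[pc] = -row[f]
        basis.append(k)
    return basis


vectors = [(1, 2, 3), (4, 5, 6), (7, 8, 9)]    # hypothetical x1, x2, x3
A = [list(col) for col in zip(*vectors)]       # coefficient matrix: x1, x2, x3 as columns
solutions = null_space(A)
k1, k2, k3 = solutions[0]                      # non-trivial, so the vectors are dependent
print([int(k) for k in (k1, k2, k3)])          # [1, -2, 1]
# assumes k1 != 0, so x1 = (-k2/k1) x2 + (-k3/k1) x3
print([int(-k2 / k1), int(-k3 / k1)])          # [2, -1], i.e. x1 = 2 x2 - x3
```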

Example 2

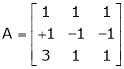

Examine whether the following vectors x1, x2, x3 are linearly independent.

Solution:

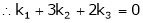

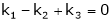

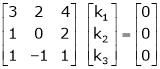

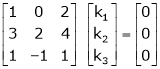

Consider the vector equation

$$x_1 k_1 + x_2 k_2 + x_3 k_3 = 0 \qquad (1)$$

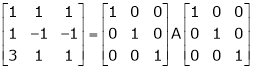

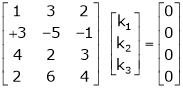

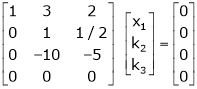

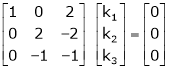

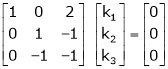

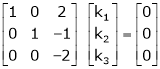

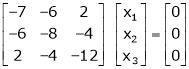

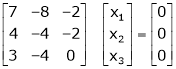

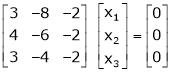

Which can be written in matrix form as,

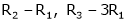

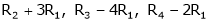

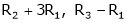

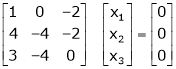

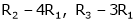

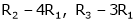

R12

R2 – 3R1, R3 – R1

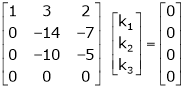

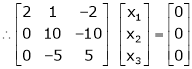

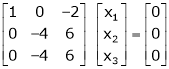

R3 + R2

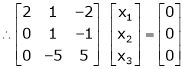

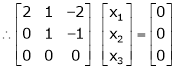

Here the rank of the coefficient matrix equals the number of unknowns, i.e. r = n = 3.

Hence the system has only the trivial solution k1 = k2 = k3 = 0, i.e. vector equation (1) has only the trivial solution. Hence the given vectors x1, x2, x3 are linearly independent.

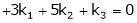

Example 3

For what values of P are the following vectors linearly independent?

Solution:

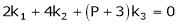

Consider the vector equation.

i.e.

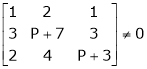

This is a homogeneous system of three equations in three unknowns, and it has only the trivial solution if and only if the determinant of the coefficient matrix is non-zero.

consider

consider  .

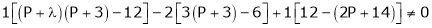

.

.

.

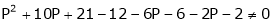

Thus, for the values of P found above, the determinant of the coefficient matrix is non-zero, so the system has only the trivial solution and hence the vectors are linearly independent.
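For three vectors with three components, the determinant test used in this example can be sketched as below. The vectors (1, 1, 1), (1, 2, 3), (1, 3, P) are hypothetical, not the ones in the example; for them the determinant works out to P - 5, so they are linearly independent for every P except P = 5.

```python
def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def independent(x1, x2, x3):
    """Three 3-vectors are independent iff their determinant is non-zero (|A| = |A^T|)."""
    return det3([x1, x2, x3]) != 0


# prints P, the determinant (P - 5), and whether the hypothetical vectors are independent
for p in range(3, 8):
    print(p, det3([(1, 1, 1), (1, 2, 3), (1, 3, p)]),
          independent((1, 1, 1), (1, 2, 3), (1, 3, p)))
```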

Note:

If the rank of the coefficient matrix is r, then its columns (the given vectors) contain r linearly independent vectors, and the remaining vectors (if any) can be expressed as linear combinations of these r vectors.

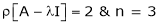

Characteristic equation:

Let A be a square matrix and $\lambda$ any scalar. Then

$$|A - \lambda I| = 0$$

is called the characteristic equation of the matrix A.

Note:

Let A be a square matrix and $\lambda$ any scalar. Then

1) $A - \lambda I$ is called the characteristic matrix;

2) $|A - \lambda I|$ is called the characteristic polynomial.

The roots of the characteristic equation are known as the characteristic roots, latent roots, eigenvalues, or proper values of the matrix A.
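For small matrices the characteristic polynomial can be computed exactly. The sketch below uses the Faddeev-LeVerrier recurrence (a technique chosen here for illustration, not taken from these notes), which returns the coefficients of |λI - A|. This differs from |A - λI| only by the factor (-1)^n, so both give the same characteristic equation and the same eigenvalues. The test matrix is hypothetical.

```python
from fractions import Fraction


def matmul(x, y):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*y)] for row in x]


def char_poly(a):
    """Coefficients [1, c_{n-1}, ..., c_0] of |λI - A| by the Faddeev-LeVerrier recurrence."""
    n = len(a)
    A = [[Fraction(v) for v in row] for row in a]
    coeffs = [Fraction(1)]
    M = [[Fraction(0)] * n for _ in range(n)]         # M_0 = 0
    for k in range(1, n + 1):
        AM = matmul(A, M)
        # M_k = A M_{k-1} + c_{n-k+1} I, where c_{n-k+1} is the last coefficient found
        M = [[AM[i][j] + (coeffs[-1] if i == j else 0) for j in range(n)] for i in range(n)]
        AM = matmul(A, M)
        # c_{n-k} = -trace(A M_k) / k
        coeffs.append(-sum(AM[i][i] for i in range(n)) / k)
    return coeffs


def evaluate(coeffs, x):
    """Horner's rule: value of the polynomial at x."""
    value = 0
    for c in coeffs:
        value = value * x + c
    return value


A = [[2, 0, 0], [0, 3, 4], [0, 4, 9]]              # hypothetical matrix
p = char_poly(A)
print([int(c) for c in p])                           # [1, -14, 35, -22]
print([lam for lam in range(-20, 21) if evaluate(p, lam) == 0])  # [1, 2, 11]
```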

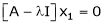

Eigenvector:

Suppose $\lambda$ is an eigenvalue of a matrix A. Then there exists a non-zero vector $x_1$ such that

$$(A - \lambda I)\,x_1 = 0, \quad \text{i.e.} \quad A x_1 = \lambda x_1. \qquad (1)$$

Such a vector $x_1$ is called an eigenvector corresponding to the eigenvalue $\lambda$.
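Equation (1) can be checked directly: a non-zero x is an eigenvector for λ exactly when Ax = λx. A minimal sketch with a hypothetical symmetric matrix S:

```python
def matvec(a, x):
    return [sum(p * q for p, q in zip(row, x)) for row in a]


def is_eigenpair(a, lam, x):
    """True if x is non-zero and Ax = λx, i.e. (A - λI)x = 0."""
    return any(v != 0 for v in x) and matvec(a, x) == [lam * v for v in x]


S = [[3, 4], [4, 9]]                # hypothetical matrix
print(is_eigenpair(S, 11, [1, 2]))  # True:  S(1, 2) = (11, 22)
print(is_eigenpair(S, 1, [-2, 1]))  # True:  S(-2, 1) = (-2, 1)
print(is_eigenpair(S, 11, [1, 0]))  # False: S(1, 0) = (3, 4)
```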

Properties of eigenvalues:

- The sum of the eigenvalues of a matrix A is equal to the sum of its diagonal elements (the trace of A).

- The product of all the eigenvalues of a matrix A is equal to its determinant |A|.

- If $\lambda_1, \lambda_2, \ldots, \lambda_n$ are the n eigenvalues of a non-singular square matrix A, then $1/\lambda_1, 1/\lambda_2, \ldots, 1/\lambda_n$ are the eigenvalues of $A^{-1}$.

- The eigenvalues of a real symmetric matrix are all real.

- If all the eigenvalues are non-zero, then $A^{-1}$ exists, and conversely.

- The eigenvalues of A and its transpose A′ are the same.
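The first two properties can be checked on a hypothetical matrix whose eigenpairs are known, here A = [[2, 0, 0], [0, 3, 4], [0, 4, 9]] with eigenvalues 2, 1, 11. The sketch first verifies each eigenpair and then compares the sum and product of the eigenvalues with the trace and the determinant.

```python
def matvec(a, x):
    return [sum(p * q for p, q in zip(row, x)) for row in a]


def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


A = [[2, 0, 0], [0, 3, 4], [0, 4, 9]]                  # hypothetical matrix
eigenpairs = {2: [1, 0, 0], 1: [0, -2, 1], 11: [0, 1, 2]}

# every listed pair satisfies Ax = λx
assert all(matvec(A, x) == [lam * v for v in x] for lam, x in eigenpairs.items())

eigenvalues = list(eigenpairs)
trace = sum(A[i][i] for i in range(3))
product = 1
for lam in eigenvalues:
    product *= lam

print(sum(eigenvalues), trace)   # 14 14
print(product, det3(A))          # 22 22
```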

Properties of eigenvectors:

- Eigenvectors corresponding to distinct eigenvalues are linearly independent.

- If two or more eigenvalues are equal, the corresponding eigenvectors may or may not be linearly independent.

- The eigenvectors corresponding to distinct eigenvalues of a real symmetric matrix are orthogonal.
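The last property can be checked with a hypothetical real symmetric matrix S. A hypothetical non-symmetric matrix T is included for contrast: its eigenvectors for distinct eigenvalues are still independent, but they are not orthogonal.

```python
def matvec(a, x):
    return [sum(p * q for p, q in zip(row, x)) for row in a]


def dot(u, v):
    return sum(p * q for p, q in zip(u, v))


def is_eigenpair(a, lam, x):
    return any(v != 0 for v in x) and matvec(a, x) == [lam * v for v in x]


S = [[3, 4], [4, 9]]               # hypothetical real symmetric matrix
u, v = [-2, 1], [1, 2]             # eigenvectors for λ = 1 and λ = 11
assert is_eigenpair(S, 1, u) and is_eigenpair(S, 11, v)
print(dot(u, v))                   # 0: orthogonal

T = [[1, 1], [0, 2]]               # hypothetical non-symmetric matrix
p, q = [1, 0], [1, 1]              # eigenvectors for λ = 1 and λ = 2
assert is_eigenpair(T, 1, p) and is_eigenpair(T, 2, q)
print(dot(p, q))                   # 1: independent, but not orthogonal
```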

Example 1

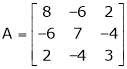

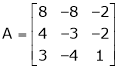

Determine the eigenvalues and eigenvectors of the matrix.

Solution:

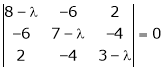

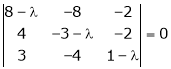

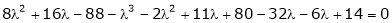

Consider the characteristic equation $|A - \lambda I| = 0$,

i.e.

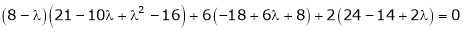

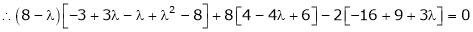

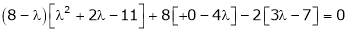

i.e.

which is the required characteristic equation.

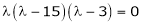

The roots of this equation are the required eigenvalues.
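For a 3 × 3 matrix, expanding |A - λI| = 0 gives the standard form λ³ - S1λ² + S2λ - |A| = 0, where S1 is the trace and S2 is the sum of the principal minors of order 2. A short sketch of this shortcut, with a hypothetical matrix rather than the one in this example:

```python
def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def char_eq_3x3(a):
    """Coefficients [1, -S1, S2, -|A|] of λ³ - S1 λ² + S2 λ - |A| = 0."""
    s1 = a[0][0] + a[1][1] + a[2][2]                       # trace
    s2 = ((a[1][1] * a[2][2] - a[1][2] * a[2][1])          # principal minor, rows/cols 2, 3
          + (a[0][0] * a[2][2] - a[0][2] * a[2][0])        # rows/cols 1, 3
          + (a[0][0] * a[1][1] - a[0][1] * a[1][0]))       # rows/cols 1, 2
    return [1, -s1, s2, -det3(a)]


A = [[2, 0, 0], [0, 3, 4], [0, 4, 9]]   # hypothetical matrix
print(char_eq_3x3(A))  # [1, -14, 35, -22], i.e. λ³ - 14λ² + 35λ - 22 = 0
```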

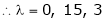

Now consider the equation

$$(A - \lambda I)X = 0 \qquad (1)$$

where X is the column vector of unknowns x1, x2, x3.

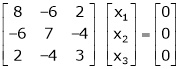

Case I:

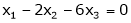

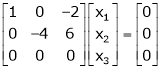

If  Equation (1) becomes

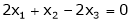

Equation (1) becomes

R1 + R2

Thus the rank of the coefficient matrix is r = 2 and n = 3, so there is n - r = 1 independent variable.

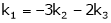

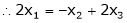

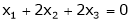

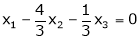

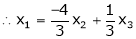

Now rewrite the equation as,

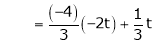

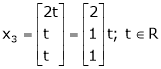

Put x3 = t

&

&

Thus  .

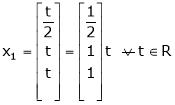

.

Is the eigenvector corresponding to  .

.

Case II:

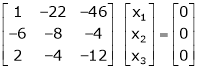

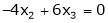

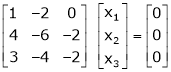

If  equation (1) becomes,

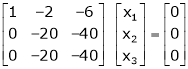

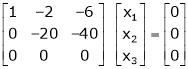

equation (1) becomes,

Here the number of independent variables is n - r, where r is the rank of the coefficient matrix.

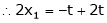

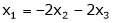

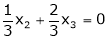

Now rewrite the equations as,

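Put the independent variable(s) equal to arbitrary constants and solve for the remaining variables. The resulting vector is the eigenvector corresponding to the second eigenvalue.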

Case III:

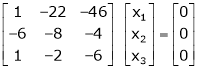

Substituting the third eigenvalue for $\lambda$, equation (1) becomes,

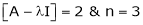

Here the rank of the coefficient matrix is r = 2, so there is n - r = 1 independent variable.

Now rewrite the equations as,

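Put the independent variable equal to t and solve for the remaining variables. The resulting vector is the eigenvector for the third eigenvalue.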

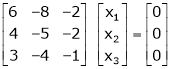
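The case-by-case procedure in this example (reduce A - λI, count the n - r independent variables, put each of them equal to a constant) can be sketched in Python. The matrices are hypothetical and the helper names are illustrative; each independent variable is put equal to 1. The second matrix has a repeated eigenvalue λ = 1 with rank r = 1, so there are n - r = 2 independent variables and two independent eigenvectors.

```python
from fractions import Fraction


def rref(a):
    """Reduced row echelon form with exact arithmetic; returns (R, pivot_columns)."""
    m = [[Fraction(x) for x in row] for row in a]
    rows, cols = len(m), len(m[0])
    pivots, r = [], 0
    for c in range(cols):
        p = next((i for i in range(r, rows) if m[i][c] != 0), None)
        if p is None:
            continue                    # column c gives an independent variable
        m[r], m[p] = m[p], m[r]
        piv = m[r][c]
        m[r] = [x / piv for x in m[r]]
        for i in range(rows):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [x - f * y for x, y in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
        if r == rows:
            break
    return m, pivots


def null_space(a):
    """One solution of a X = 0 per independent variable, with that variable put = 1."""
    R, pivots = rref(a)
    n = len(a[0])
    basis = []
    for f in (j for j in range(n) if j not in pivots):
        k = [Fraction(0)] * n
        k[f] = Fraction(1)
        for row, pc in zip(R, pivots):
            k[pc] = -row[f]
        basis.append(k)
    return basis


def eigenvectors(a, lam):
    """Independent eigenvectors for lam: the non-zero solutions of (A - λI)X = 0."""
    n = len(a)
    shifted = [[a[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    return null_space(shifted)


A = [[2, 0, 0], [0, 3, 4], [0, 4, 9]]     # hypothetical, eigenvalues 1, 2, 11
print(eigenvectors(A, 11) == [[0, Fraction(1, 2), 1]])   # True: t(0, 1/2, 1) with t = 1

B = [[2, 1, 1], [1, 2, 1], [1, 1, 2]]     # hypothetical, eigenvalues 1, 1, 4
print(eigenvectors(B, 1) == [[-1, 1, 0], [-1, 0, 1]])    # True: two independent variables
```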

Example 2

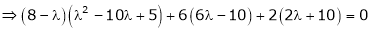

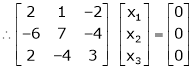

Find the eigenvalues and eigenvectors of the matrix.

Solution:

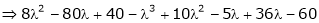

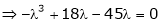

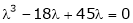

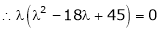

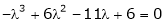

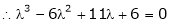

Consider the characteristic equation as

i.e.

i.e.

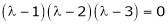

are the required eigenvalues.

are the required eigenvalues.

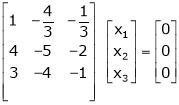

Now consider the equation

$$(A - \lambda I)X = 0 \qquad (1)$$

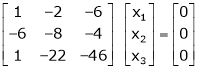

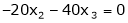

Case I:

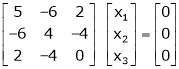

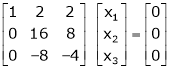

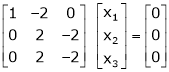

Equation (1) becomes,

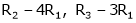

Equation (1) becomes,

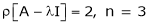

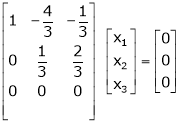

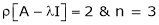

Thus r = 2 and n = 3, so there is n - r = 3 - 2 = 1 independent variable.

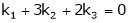

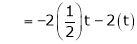

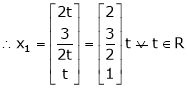

Now rewrite the equations as,

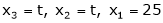

Put the independent variable equal to t and solve for the remaining variables. The eigenvector for the first eigenvalue is then

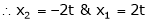

Case II:

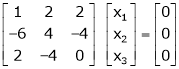

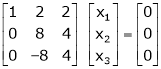

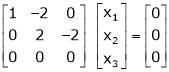

Substituting the second eigenvalue for $\lambda$, equation (1) becomes,

Thus the number of independent variables is n - r, where r is the rank of the coefficient matrix.

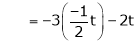

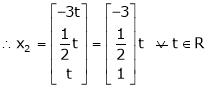

Now rewrite the equations as,

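Put the independent variables equal to arbitrary constants and solve for the remaining variables. The resulting vector is the eigenvector for the second eigenvalue.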

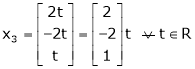

Case II:-

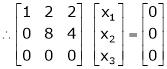

If  equation (1) gives,

equation (1) gives,

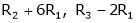

R1 – R2

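Thus the number of independent variables is n - r, where r is the rank of the coefficient matrix.

Now put the independent variable(s) equal to arbitrary constants and solve for the remaining variables. The resulting vector is the eigenvector for the third eigenvalue.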
